This week’s post is a guest post by Liz Richardson, who is a senior specialist in evaluation at Natural England. As per usual when I have a guest post, Liz wrote the words and I just drew the comics.

As an evaluator I talk a lot about outcomes: what they are, how to word them, and what they are not! Terminology can be a daily hurdle, as the confusion around outputs, outcomes and impacts is very genuine. But then along come Benefits and their need to be realised. I now feel I am on the other side of the terminology fence. How do benefits fit in with evaluation, and how are they different from outputs and outcomes? I am going to attempt to unravel this here.

Where I learned about the term Benefits Realisation.

Benefits realisation has been more prominent in the UK since 2003, but I have become more aware of it through my job working on priority projects, where benefits are a requirement alongside evaluation as part of the project management process. My experience is of working with teams who are trying to define project benefits alongside outputs and outcomes. As we evaluators are collaborators, this has led me to explore the topic further and to try to bring both processes closer together.

Why it’s important.

Before we get on to what the difference is, let’s think about why this matters for understanding whether our project or programme is achieving its aims and making a positive change. Having two separate processes is unhelpful for project staff, so we need to bring them together in a meaningful way, so that one supports and informs the other, the workload for teams is reduced, and our learning and accountability needs are met. Evaluation and benefits realisation are both crucial aspects of project management, but they serve different purposes and are conducted at different stages of a project.

How do we define benefits realisation and evaluation?

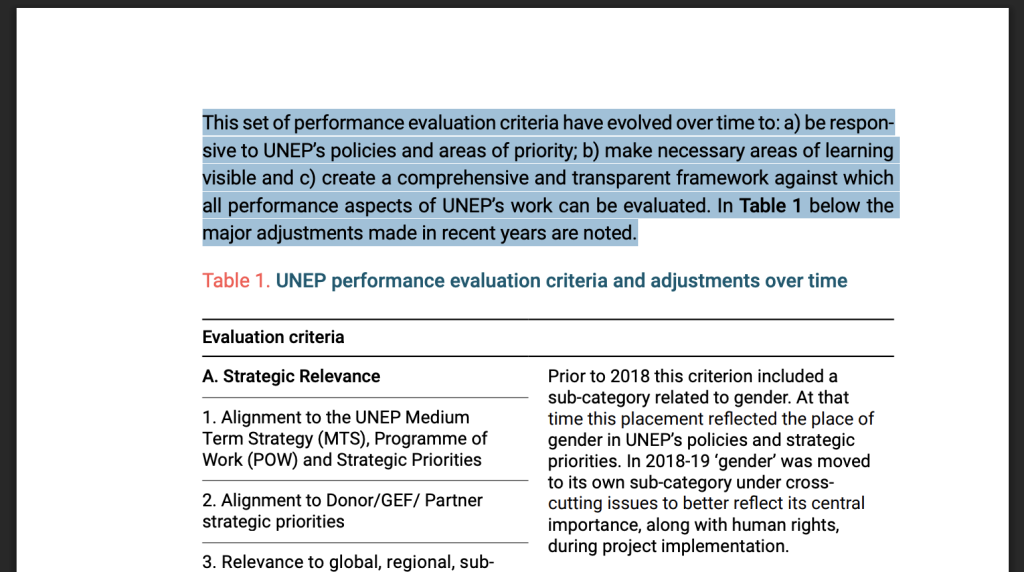

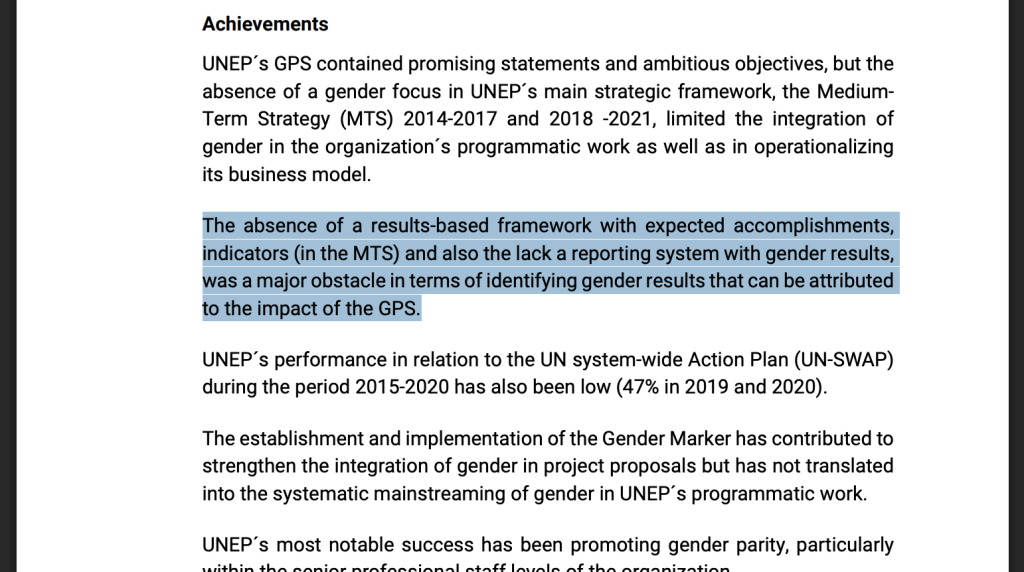

Let’s look at the definitions for each of them. Evaluation draws on a range of approaches and methodologies to assess a project’s outputs, outcomes and impacts and uses a range of methods to collect and analyse data. As part of evaluation, we consider attribution and contribution and the extent to which the delivery meets these criteria. Outcomes focus on changes in knowledge, skills, behaviours and attitudes. We want to know how and why a project is effective and when it isn’t. So, hopefully we all agree with that.

A Benefit is a positive, measurable improvement resulting from an outcome that is perceived as an advantage by an organisation. Benefits management identifies, plans and tracks benefits through to realisation, which is the practice of ensuring that benefits are derived from outputs and outcomes using KPIs, timelines and milestones.

What are some parallels between benefits and outcomes?

That seems reasonably clear, but it still feels like there is some overlap here. Could a benefit be an impact or a long-term outcome? While I expect there is room for overlap, perhaps the difference lies in the focus. Benefits feel very specific and tangible; they have a value and can be monitored. A long-term outcome is a broader change that leads to impact, and impact is something that a project contributes towards rather than achieves solely on its own.

What are some of the differences?

So now we can perhaps begin to see where some of the differences lie. Evaluation uses methods and approaches to ‘test’ theories and answer questions. It is interested in what worked well, what worked less well and why, as well as the extent to which the outcomes are a result of the intervention. Benefits management ensures that the planned benefits deliver value for the organisation, and often focuses on what they are worth. This highlights different audiences: outcomes for beneficiaries, and benefits for organisations or funders, although there will be overlaps here.

Here is an example of evaluation and benefits realisation in action.

Let’s explore this using an example of a Workplace Wellbeing programme aiming to improve mental and physical health of employees.

For the evaluation, outcomes would include reduced stress, improved mental wellbeing and improved physical health. We would want to know whether the programme has been effective, and what has worked well and not so well. The evaluation would want to understand whether the changes are a result of the programme or whether anything else may have caused them, for example a change in work practices implemented at the same time, or activities outside of work. The methods used would take this into account.

Benefits realisation would focus on ensuring that the benefits defined at the start of the programme are achieved and aligned with strategic goals. Benefits might include reduced absenteeism and improved employee retention, thus supporting the organisation’s strategic aims around employee satisfaction and higher productivity. These benefits could be monitored and tracked, and would be a result of the outcomes.

The key here is that if these benefits are not realised, it is the evaluation that will tell us why. I now feel that Benefits could have a place in a Theory of Change, or at least that I am getting closer to bringing evaluation and benefits realisation together.

It would be good to get people’s feedback on whether this rings true.