I’ve been writing for a long while about how Todoist is my brain. I’ve been using it since 2014, and every year it has gotten better and better at doing what I love: managing my tasks.

However, until recently, I felt that was all it could do, and I kept looking for another system to better manage projects. I used Notion for a while and even shared four Notion templates showing how I used it to track my research pipeline, student thesis projects, summer goals, and course prep notes. After about six months, I moved away from it. It was complicated, cumbersome, and more hassle than it was worth for me personally, although I still recommend it for folks interested in a really powerful workspace platform.

Next, I tried ClickUp. I was so excited to use it; I thought it would be the answer! And for many people it is, but it was similarly more than I needed, and after three months of going all-in on it, I went back to Todoist yet again.

These days, I use Todoist as my project management system. Does it have all the bells and whistles of other project management tools? Probably not. But does it do everything I need in an intuitive, easy-to-navigate way? Totally!

It was when Todoist introduced the board view that I fell in love with it for the thousandth time. With Kanban-style boards, which are really just another way of organizing the same tasks, I finally felt I could do what a lot of other folks were doing with other systems. And the first place I used them was my research pipeline.

I’ve seen people use whiteboards, Excel spreadsheets, Post-it notes, Trello boards, Notion, and so much more to track their research pipeline. In fact, I’ve tried them all too! But each lasted only a few months before I forgot about it or grew annoyed with it. That’s because none of them integrated well into my regular habits. Sure, I could have done more to make them work, but I’d rather create systems that work for me than force myself into new systems that may not.

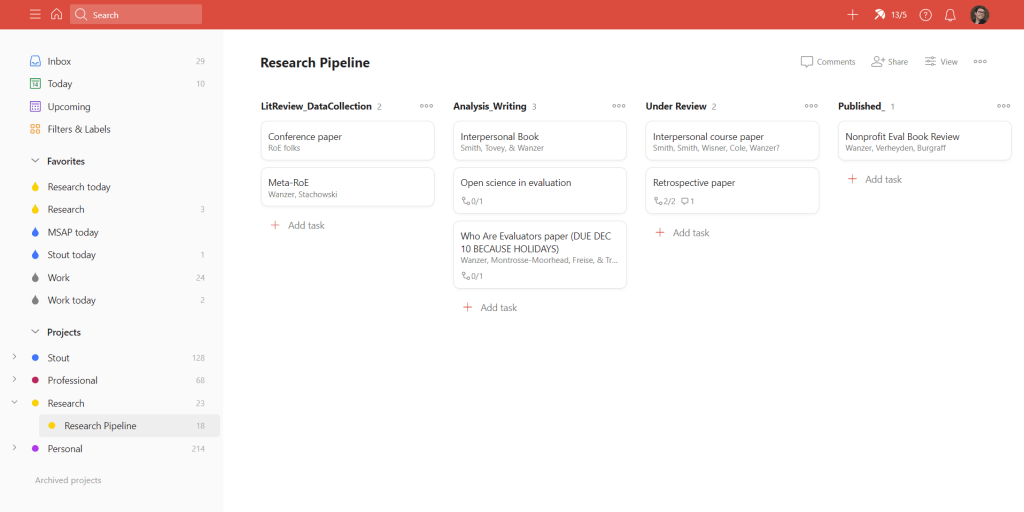

Here’s how I set up my research pipeline in Todoist. First, I created a separate project called “Research Pipeline,” which I keep distinct from my research project tasks (note the larger project called “Research”).

Second, within that project I have four sections, which I chose to be LitReview/Data Collection, Analysis/Writing, Under Review, and Published. Not shown here is a fifth section called Ideas, where I jot down notes on potential future projects. Note that Todoist doesn’t like certain characters in section names, which is why you’ll see underscores in the screenshot, but you can play around with whatever names work best for you.

Third, under “View,” I choose View as Board and Group by Default (which groups tasks by section). That gives you the view shown above!

Lastly, I add each research project or paper as an uncompletable task. That’s why there are no circles to “complete” them: instead of disappearing when they’re done, they move to the Published section so I can celebrate my accomplishments! Don’t look too closely there, though; many of my published articles are missing because I set up this system after most of them were already published.

I use the Description field for journal or author information, and I use sub-tasks and comments to organize broader thoughts about each paper or project that aren’t really specific tasks.

I then use the Research project (see my projects list in the left menu) to organize the tasks themselves. Each task in Research gets a label for the research project it belongs to, and I view that project as a board grouped by label. I could also organize the tasks by sections, which would work similarly.