This blog post is a modified segment of my dissertation, done under the supervision of Dr. Tiffany Berry at Claremont Graduate University. You can read the full dissertation on the Open Science Framework here. The rest of the blog posts in this series on my dissertation are linked below:

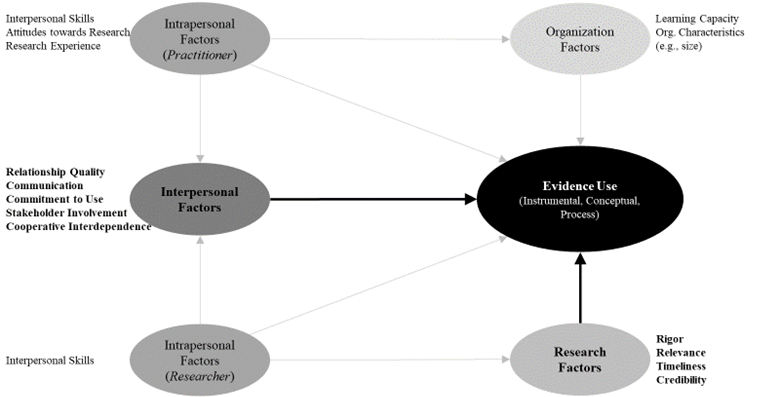

- Factors that promote use: A conceptual framework

- Defining evidence use

- Overview of my dissertation study: sample, recruitment, & measures

- Question 1: To what extent are interpersonal and research factors related to use?

- Question 2: To what extent do interpersonal factors relate to use beyond research factors?

- Question 3: How do researchers and evaluators differ in use, interpersonal factors, and research factors?

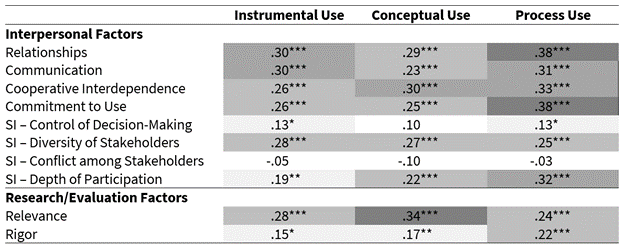

To answer this research question, I examined how self-reported interpersonal factors (i.e., relationships, communication, cooperative interdependence, commitment to use, stakeholder involvement) and research/evaluation factors (i.e., relevance, rigor) correlated with use (i.e., instrumental, conceptual, and process use). These correlations are shown in the figure below.

Overall, all of the interpersonal factors except for two items in the stakeholder involvement scale were moderately correlated with use, with correlations ranging from r = .19 to r = .38. Both research relevance and rigor were also correlated with use, although relevance (rs from .24 to .34) was more strongly correlated with instrumental and conceptual use than rigor was (rs from .15 to .22).

These findings support two hypotheses I had coming into this study:

- Interpersonal factors are perhaps more important for process use than for instrumental and conceptual use.

- Relevance is more strongly correlated with use than rigor, although this was only found for instrumental and conceptual use.

I also examined some demographic factors to see how they related to use. Partnerships that had been together longer reported greater instrumental (r = .20), conceptual (r = .20), and process (r = .25) use, as well as greater rigor (r = .16) and less conflict among partners (r = -.20). Partnerships with more members reported greater instrumental (r = .14) and conceptual (r = .15) use, lower quality relationships (r = -.18), lower commitment to use (r = -.17), and less conflict among partners (r = -.20). Interestingly, participants who reported using an RCT also reported higher levels of process use (d = .47), instrumental use (d = .39), and conceptual use (d = .45).
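For readers less familiar with the two statistics reported above, here is a minimal Python sketch of how a Pearson correlation (r) and a Cohen's d can be computed. This is not the analysis code from my dissertation: the function names and the toy numbers are hypothetical, and the pooled-standard-deviation form of Cohen's d is an assumption chosen for illustration.

```python
import math
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def cohens_d(group1, group2):
    """Standardized mean difference using the pooled sample SD (assumed variant)."""
    n1, n2 = len(group1), len(group2)
    s1, s2 = stdev(group1), stdev(group2)
    pooled_sd = math.sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2))
    return (mean(group1) - mean(group2)) / pooled_sd


# Made-up illustrative values, NOT data from the study.
years_together = [1, 2, 3, 4, 5]
process_use = [2.0, 2.5, 2.4, 3.1, 3.0]
print(round(pearson_r(years_together, process_use), 2))

use_with_rct = [3.4, 3.9, 3.1, 3.6]
use_without_rct = [3.0, 3.5, 2.8, 3.3]
print(round(cohens_d(use_with_rct, use_without_rct), 2))
```

A correlation describes how two continuous measures move together (for example, partnership length and process use), while Cohen's d expresses the difference between two group means (for example, RCT versus no RCT) in standard deviation units.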

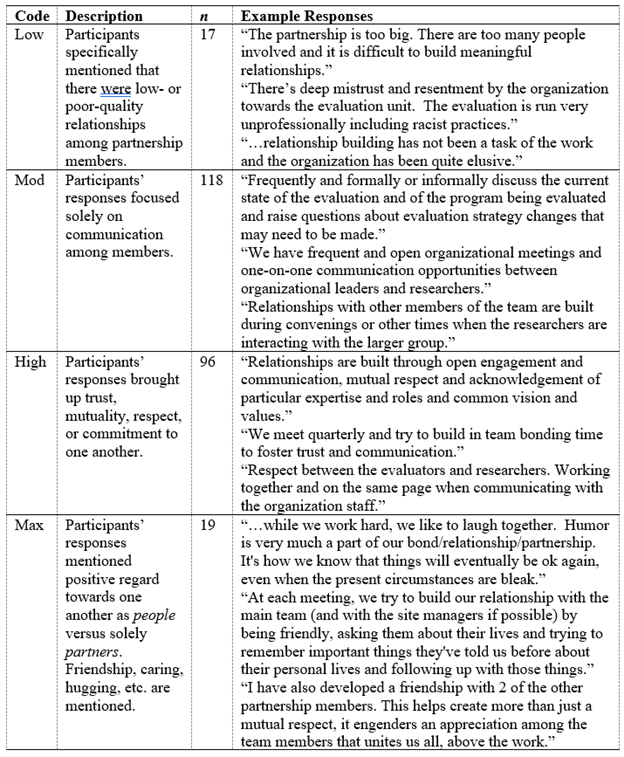

Lastly, I asked participants to respond to an open-ended question about the quality of relationships among members. These responses were coded into four levels of relationship quality, which you can see in the table below. Although the differences were not statistically significant, use increased slightly across the four levels of relationship quality.