A very relevant post on the blog “Agilefacile” by Ewen LeBorgne, a guru of knowledge management and facilitation, but above all an excellent person: “A meta look at resources to work and facilitate online more effectively“.

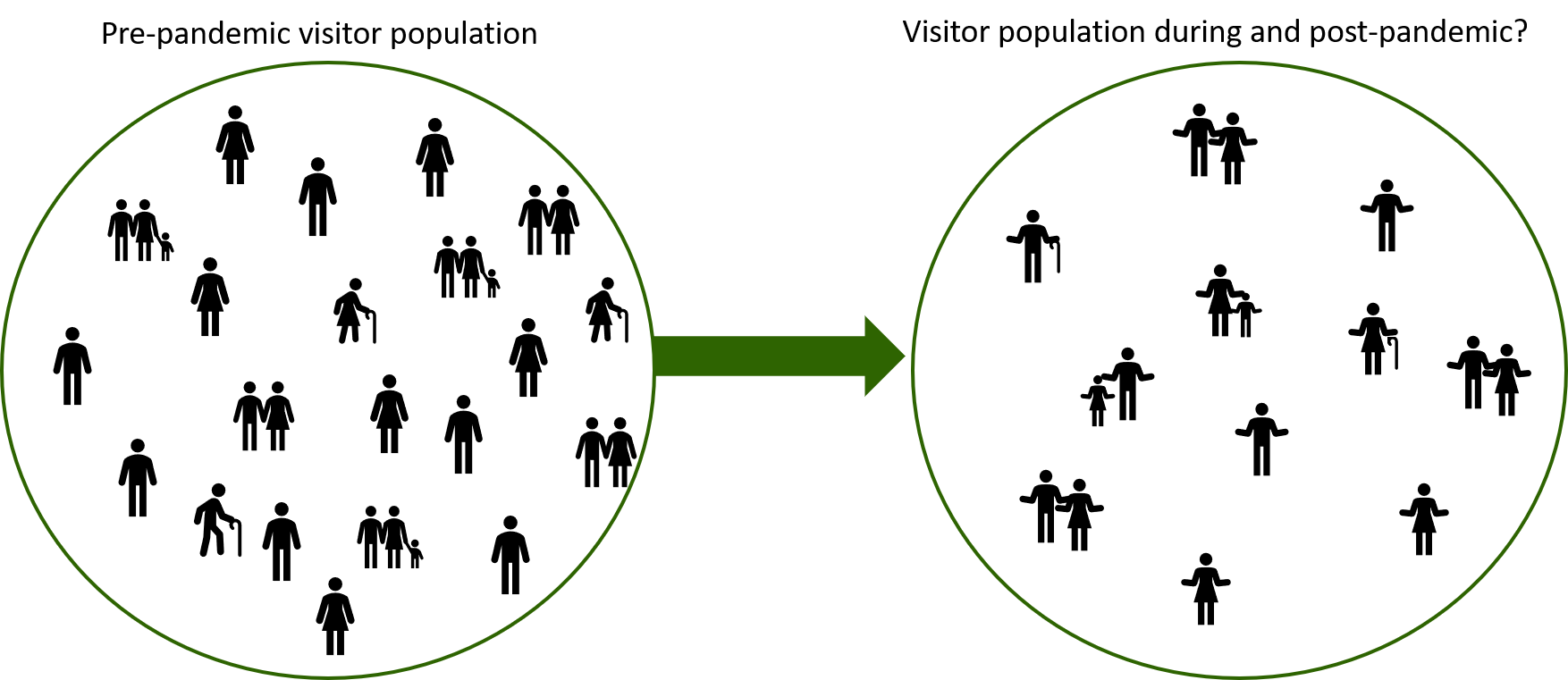

In a nutshell, he points out that online facilitation actually follows many of the same principles as face-to-face facilitation. However, we need to keep in mind some practical, logistical, design, and emotional factors:

- Whether we need synchronous conversations (at the same time) or asynchronous ones (at different times), depending on how geographically dispersed the participants are.

- How best to divide up the time.

- How to break the ice or “read people’s emotions”.

He lists several sources, but his top recommendation is the “Online Meeting Resource Toolkit for Facilitators”, recently put together during the coronavirus pandemic by the “Facilitators for Pandemic Response” group. In it we can dig into both basic and advanced related topics: “moving fully” to online work, virtual teams, and even facilitator artifacts for Zoom meetings during the COVID-19 response. Let’s make remote facilitation easier in these uncertain times.