Tamara Hamai and I have been sowing the seeds for our new program, Evidence for Engagement, for months. Our partnership happened so organically – a meeting of the minds for two evaluators who have experience with and a passion for organizations that serve youth and families. We’d been exploring the best way to support these organizations and help them use evaluation to improve both their access to funding and their services for children and families.

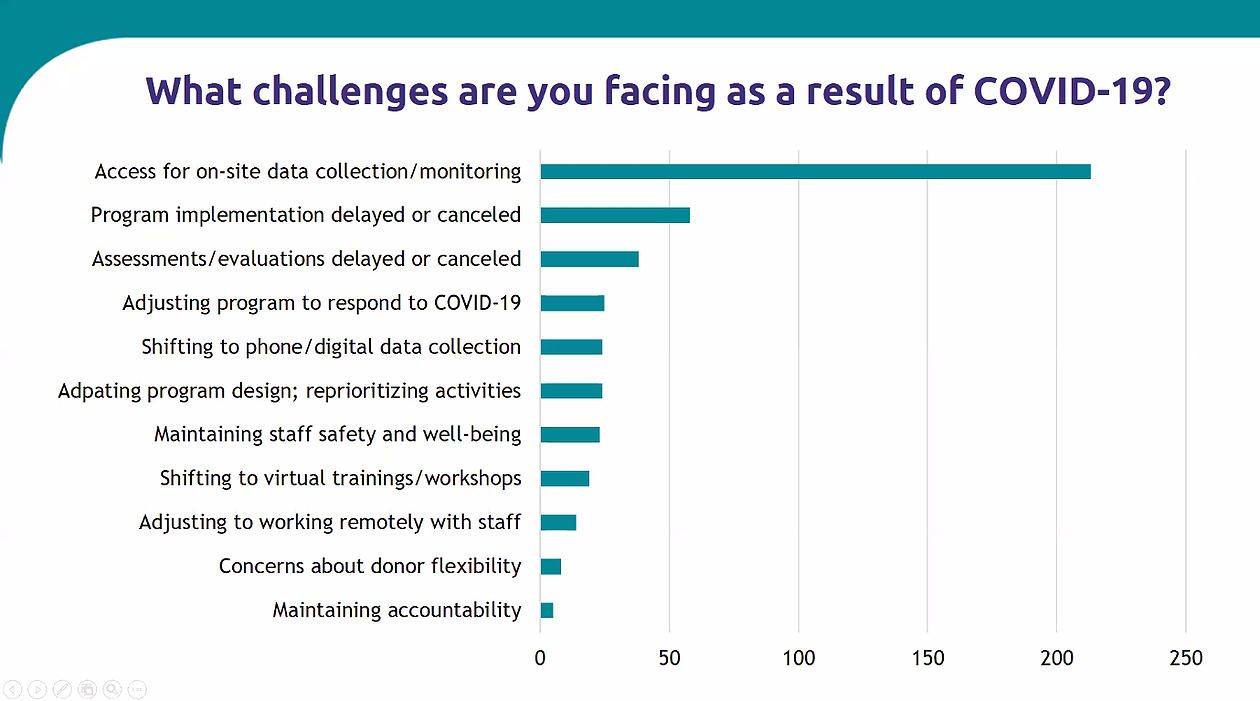

Then COVID hit.

The pandemic has caused all of us to pause and re-evaluate how our work fits into a very new, very different reality. Tamara and I know that small organizations, especially those that work in schools, are struggling right now. Their access to the people they serve has been essentially cut off. We realized that organizations may need our help even more than before.

Our solution: We’re running a totally free, three-week email series that will help small youth- and family-serving organizations build their evidence base (which is required under the Every Student Succeeds Act for any organization receiving federal education funds). Through videos, worksheets, frameworks, and success stories, Tamara and I will walk participants through the process of becoming evidence-based organizations and help them see this as an opportunity, not a burden.

The goal: We want to help vital, community-based organizations plan for the future, open themselves up to new opportunities, and become more sustainably funded. We’re hoping that this opportunity will help them better serve youth and families, not only during this difficult period, but also for a long time afterward.

For us, this is also about equity. We know that for many community-based, minority-owned organizations, budgeting for evaluation is out of the question. We also know that these grassroots organizations are having a profound impact on their communities, and that their communities need all the support we can give. We’re hoping that we can get more small, local organizations approved as evidence-based programs in their districts and begin to level the playing field.

If you think this program will benefit you and your organization, sign up! If you know of someone else who could use this support, encourage them to join. Feel free to share this link widely: bit.ly/evidence4engagement