This is the BetterEvaluation platform's statement on COVID-19 (April 2020)

The COVID-19 pandemic is rapidly transforming our world. People, communities, and organizations face enormous challenges and uncertainties. The climate crisis and unprecedented natural disasters have strained our limited resources. These global challenges put the Sustainable Development Goals at risk and threaten the well-being of people and the planet. At BetterEvaluation, we believe we have an important role to play in responding to this evolving situation.

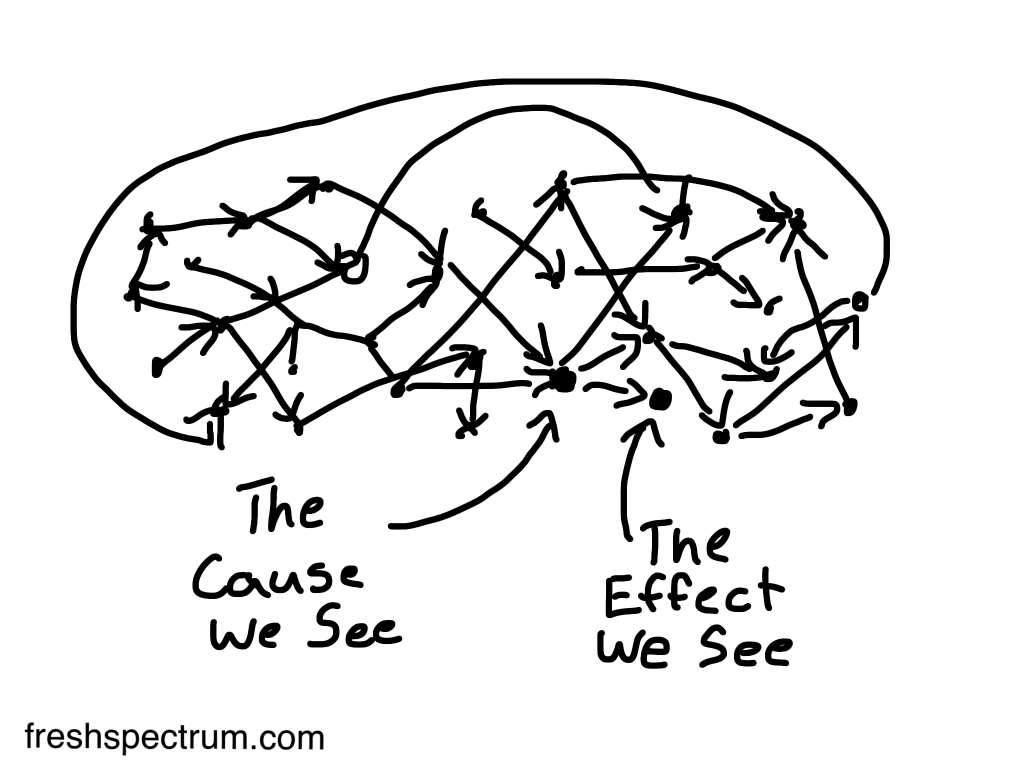

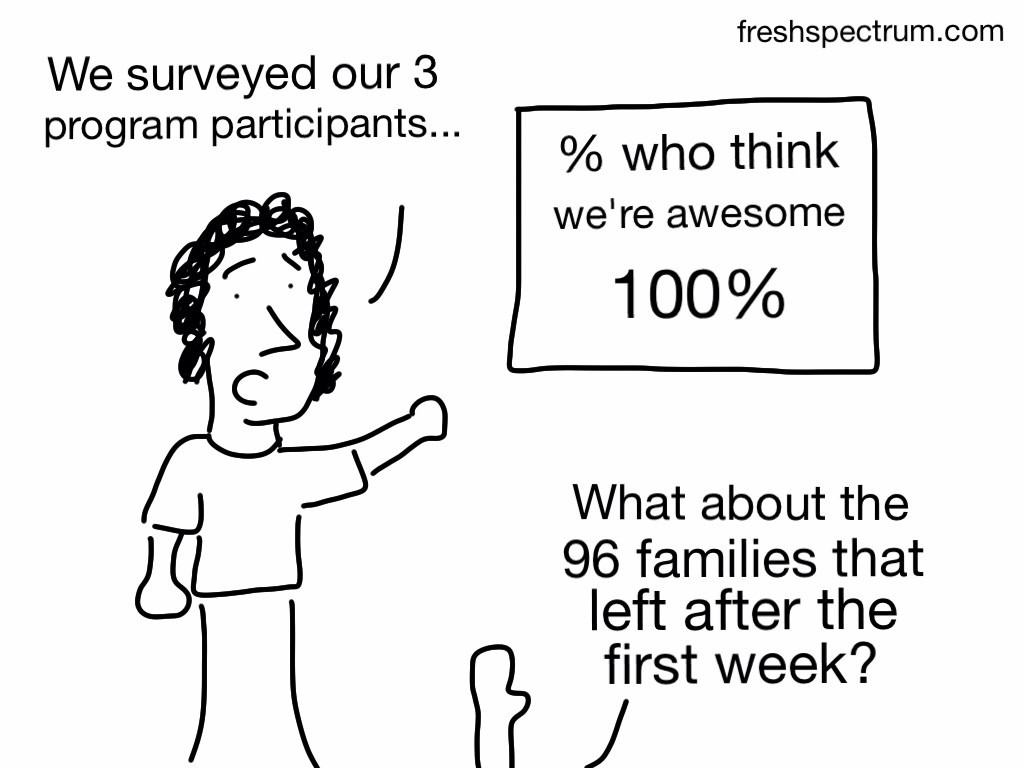

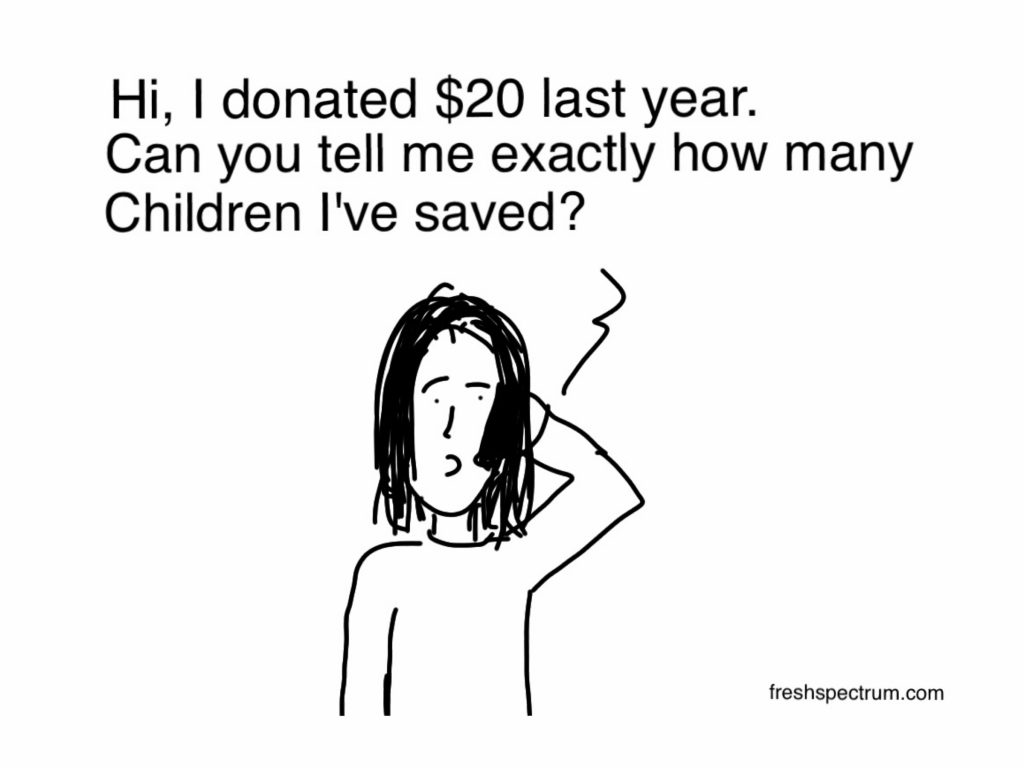

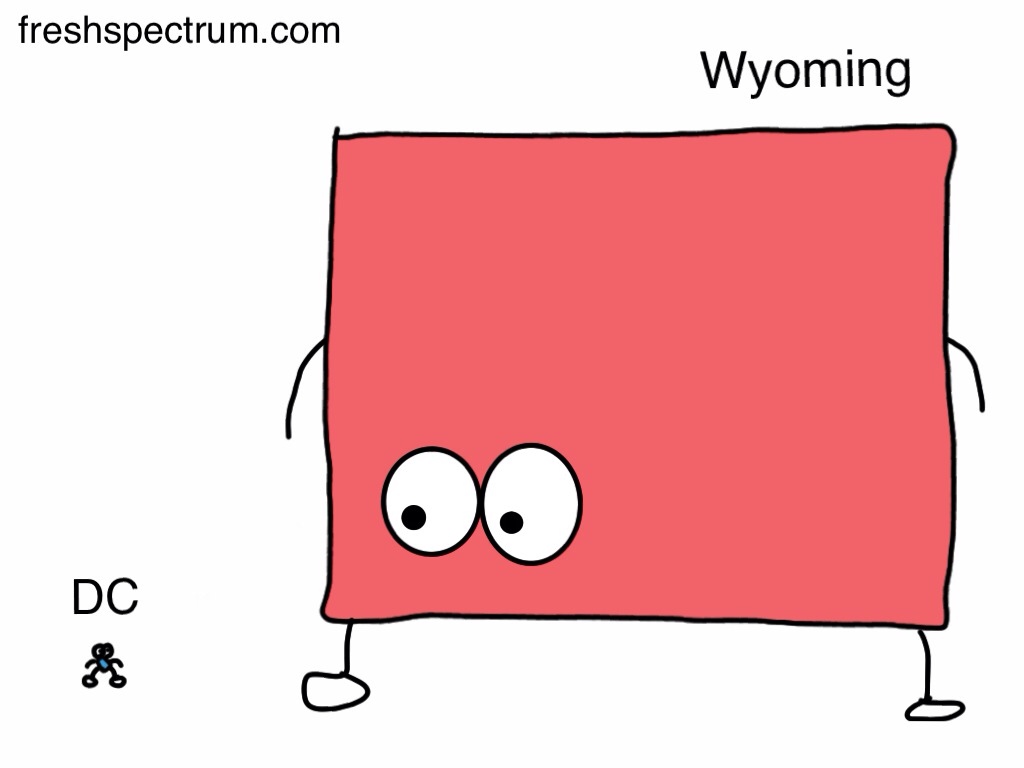

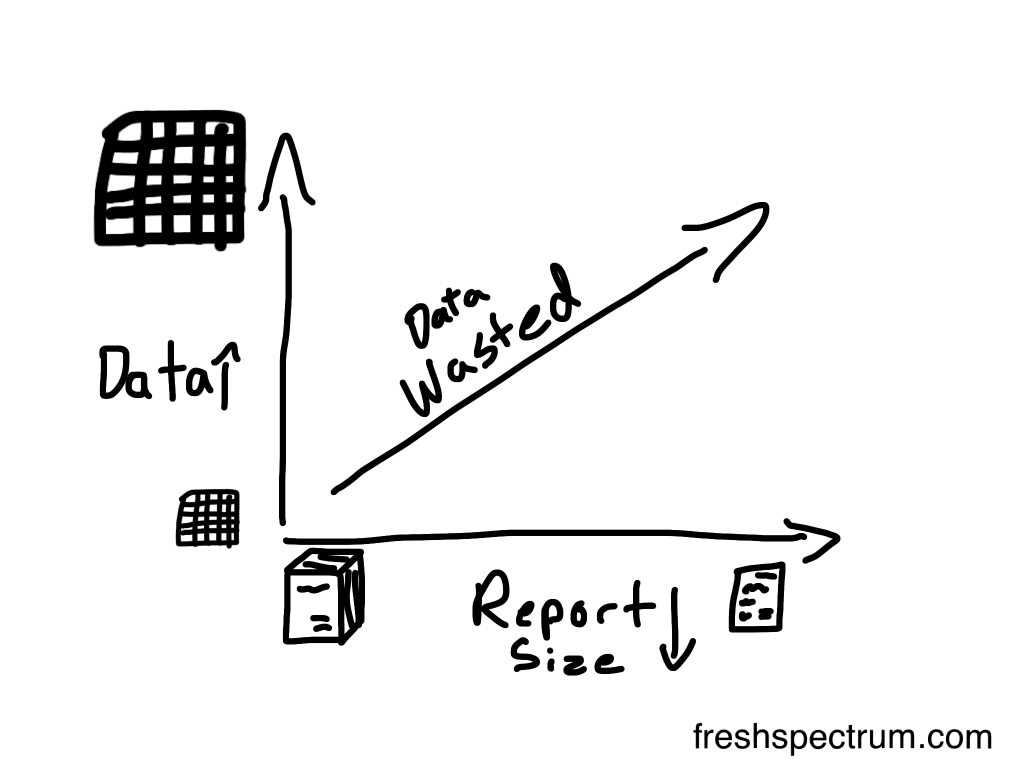

We work to support better evaluation worldwide. Good evaluation helps people identify the information they need and make sense of it. It helps inform decisions about what to do and how to improve results. Good evaluation is essential for guiding the best use of resources and for ensuring accountability and learning. During this pandemic and in the post-pandemic world, our work is more important than ever.

Here is how we are responding:

1 Working remotely to ensure the safety of our staff and the community.

2 Finalizing user experience improvements to the website to make it easier to find relevant information.

• Creating and curating additional content to address the current context, including:

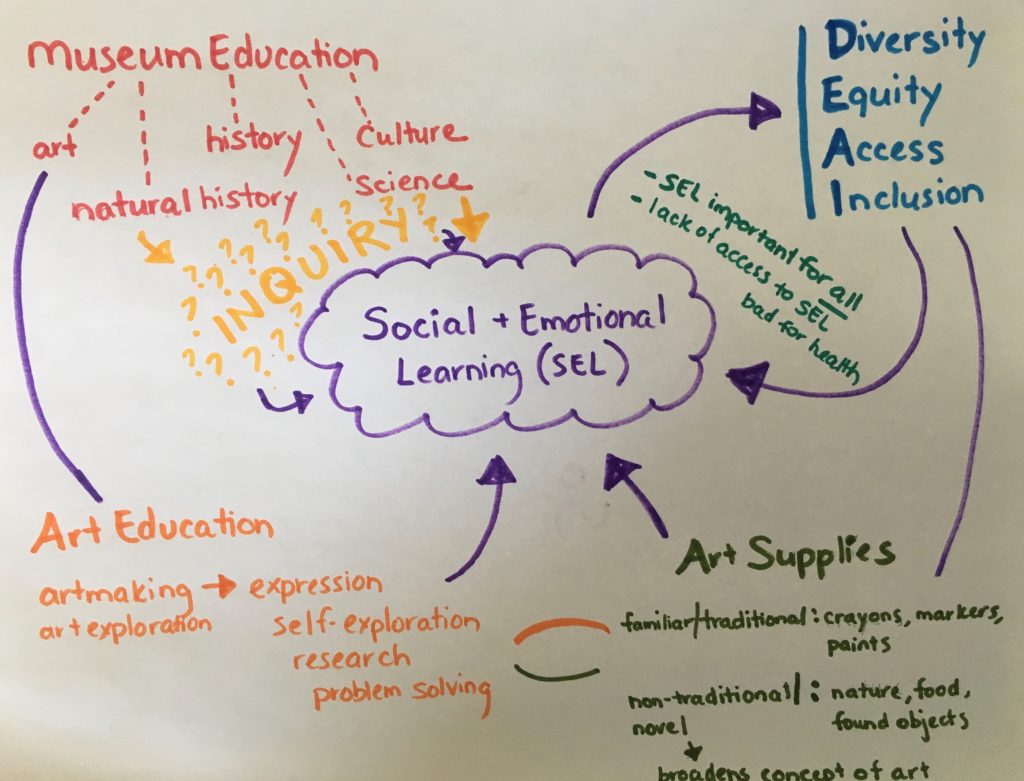

• real-time evaluation

• evaluation for adaptive management

• addressing equity in evaluation

• evaluation for accountability and resource allocation

• ways of doing evaluation within physical distancing constraints

• ways of working effectively online

• resources related to evaluation in the COVID-19 pandemic

3 Continuing existing partnerships and capacity-strengthening projects, adapting as needed.

4 Exploring ways to contribute to specific efforts to address these challenges locally and globally.

BetterEvaluation will continue to work collaboratively to create, share, and support the use of knowledge about evaluation.

-Drawing on the experiences of our board, staff, and partners in capacity strengthening during health crises and natural disasters.

-Firmly committed to using the strengths of evaluation to build a better world, particularly for marginalized groups and those most affected by the current crises.