Today’s post started as a comic request and turned into a Q&A.

Here is the question that came to me from Randi Knox.

“I’m looking for a comic to communicate the difference between program monitoring vs program evaluation. I didn’t see anything specific to this in your existing materials. I was wondering if you’d be open to making a comic for this purpose?”

It’s certainly a topic that I haven’t fully delved into, but I did think of one comic from a couple of years ago.

But I think the question is a good one, and I wanted a little more inspiration. So I asked Randi a couple of follow-ups. Here is what she said.

“I’m a relatively new internal evaluator in a department that recently rediscovered the joys of evaluation. I feel fortunate to work with a team of folks who are eager to evaluate, but I also get the sense that ‘evaluation’ is a loaded word for many team members. Some tend to call everything ‘evaluation’ and assume all data collection is for ‘evaluation,’ when this is not necessarily the case.

“In considering how to create a shared understanding among team members, I thought it could be helpful to adopt the term monitoring as a less threatening, helpful, and natural part of program implementation and management. I also expect differentiating monitoring and evaluation could help decrease evaluation anxiety. So now I’m challenged to clarify what I mean by each of these terms.”

Here are the comics the conversation inspired.

“Some tend to call everything ‘evaluation’ and assume all data collection is for ‘evaluation,’ when this is not necessarily the case.”

“I also expect differentiating monitoring and evaluation could help decrease evaluation anxiety.”

“So now I’m challenged to clarify what I mean by each of these terms.”

I did a bit of internet searching in the hope of finding a really good explanation of the differences. But everything I found was a little too jargony to be useful.

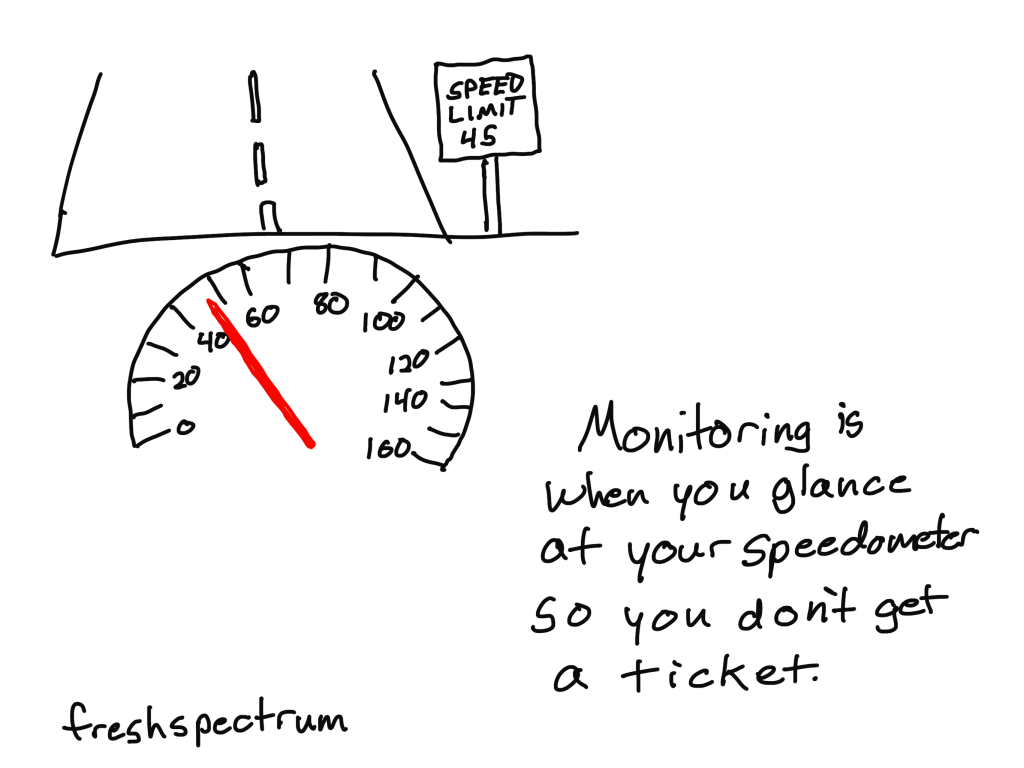

I focused on monitoring because I already have a fair number of comics designed to define evaluation. In these kinds of situations I usually fall back on metaphor. What could be fitting, or silly enough, to communicate the definition of measurement? The following two are my attempts to fit that description.

Here is a speedometer metaphor.

And this one is the silly one.

Do you have a good way of describing the differences between monitoring and evaluation?

I don’t think I’ve cracked this one yet, so I would love to hear it. Let me know in the comments.

Randi Knox is a Supervisor of Research Evaluation & Program Management at Boys Town National Research Hospital in Omaha, Nebraska. If you want to connect with Randi, you can find her on LinkedIn.