If you are an evaluator, or someone interested in learning more about evaluation, you might have experienced the frustration of searching for evaluation-related content online. The word “evaluation” is used in so many different contexts and industries that it can be hard to filter out the noise and find the information you’re looking for.

The term ‘evaluation’ is a common denominator in numerous fields. From education to healthcare, from technology to the arts, ‘evaluation’ is a universal process of assessing, measuring, and judging. It’s a critical component of decision-making, improvement, and advancement in any industry. In education, we evaluate students’ performance. In healthcare, we evaluate patients’ health conditions. In technology, we evaluate software performance. In the arts, we evaluate the aesthetic appeal of a piece. The list goes on. The omnipresence of ‘evaluation’ in our vocabulary is a testament to its importance, but it also creates a significant challenge when searching for specific ‘evaluation’ content online.

I recently started following #evaluation on some social media accounts. Here’s some of the content I get that’s not at all about the professional development or learning opportunities I’d hoped for:

The problem gets even worse if you try to do some job searching. Lots of people have “evaluation” of something in their job description. Case in point:

And if you happen to be an evaluation consultant looking for RFPs for evaluation contracts, finding them can be nearly impossible. Nearly every RFP in every field mentions the “RFP evaluation process”, which wipes out your keyword search term in one fell swoop!

How can we improve the searchability of evaluation content?

As evaluators, we can do our part to improve the searchability of evaluation content online. Here are some suggestions:

- Use specific and descriptive keywords when creating or sharing evaluation content, such as “program evaluation” or “outcome evaluation”
- Use hashtags, tags, or categories to label and organize evaluation content on social media platforms, blogs, or websites
- Join and follow online communities, networks, or groups that are dedicated to evaluation, such as your local Evaluation Association
- Subscribe to newsletters, podcasts, or blogs that feature evaluation content (hint: have you signed up for our Newsletter? Scroll to the bottom of this page to sign up!)
- Attend webinars and workshops by evaluation experts
- Share and recommend evaluation content that you find useful, interesting, or relevant with your colleagues, friends, or followers
- Follow your favourite evaluators on social media!

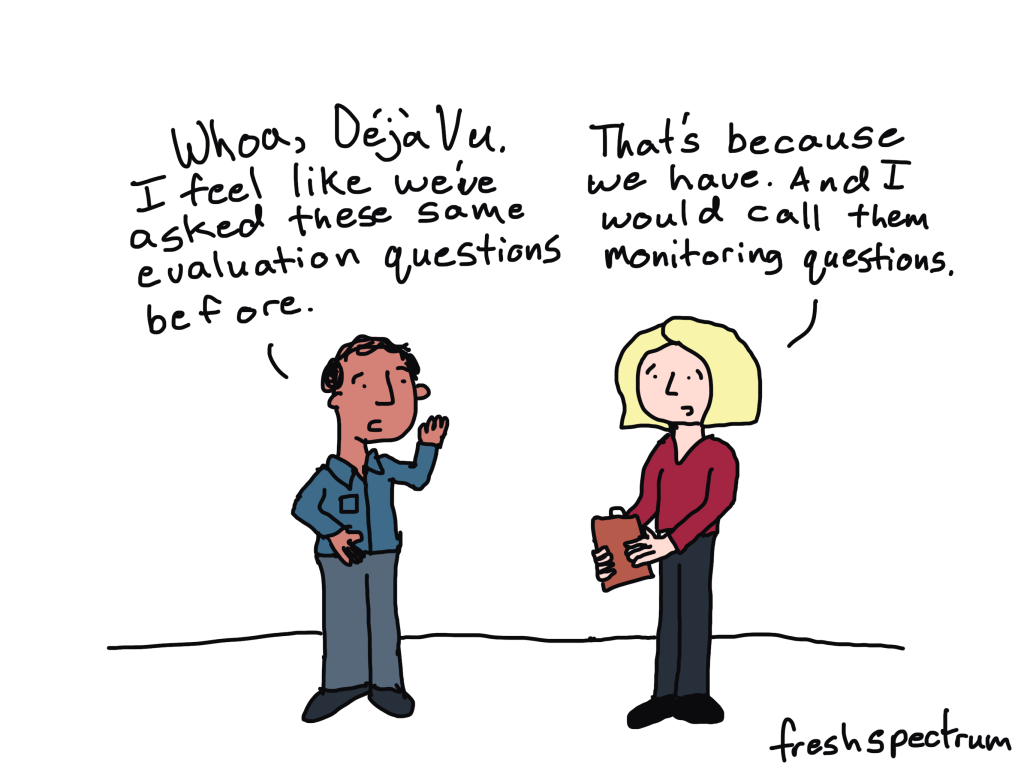

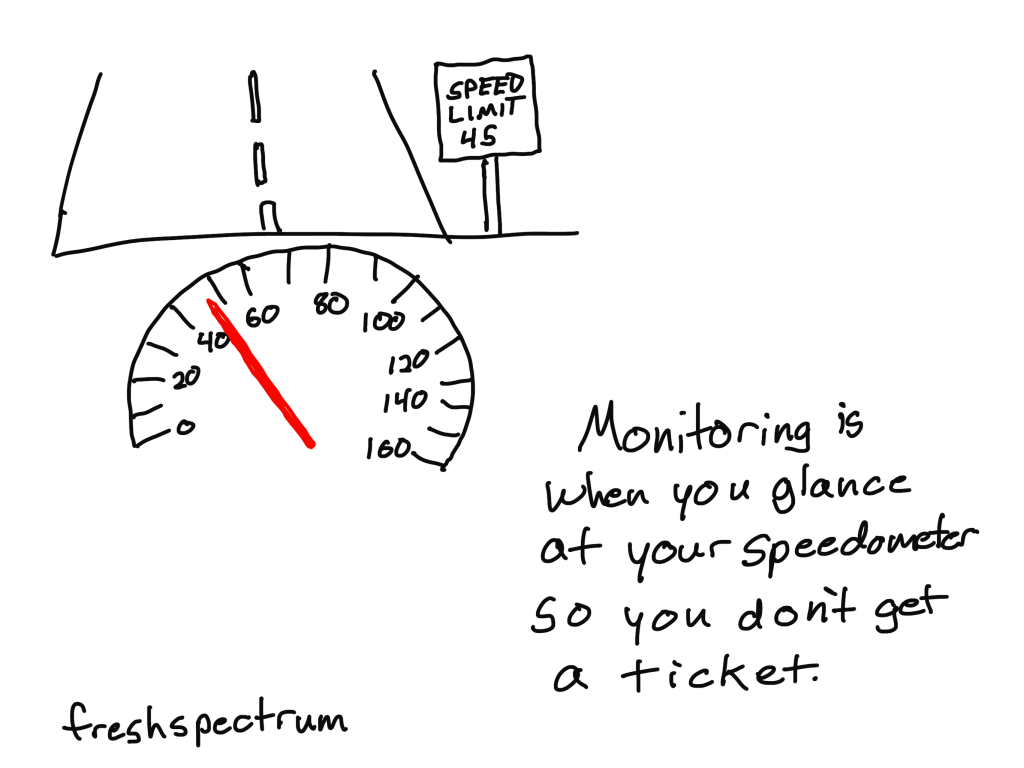

I’ve spent a bit of time in my career explaining to clients that evaluation is not the same as research, and yet sometimes searching for “research” content may generate better results than “evaluation” content. It seems unfair!

As more evaluation associations offer credentialing and add to the professionalization of our world, it may become easier to find evaluation content. More universities and colleges are offering programs directly in evaluation, which further adds credibility to the role and the field. I heard somewhere that evaluation is the fastest-growing field that no one has heard of. Perhaps as evaluation moves more into the spotlight, searching for content will be easier.