
As we celebrate National Career Development Month, some of us may be reflecting on our professional journeys and the choices that have shaped our paths. For those considering a shift in their career trajectory, pursuing a master’s degree in health evaluation could be a meaningful step; at least, it was for me. To give you some context, prior to pursuing a graduate degree, my experience was primarily in clinical research. I focused on conducting patient interviews, collecting data, navigating ethics approvals, training research personnel, and ensuring compliance with research protocols. Working in academia, I realized my passion for improving existing initiatives and supporting associated staff with their desired goals and impact. Ultimately, I recognized my desire for a job that prioritized actionable and meaningful change in the community. By chance (or maybe fate), I was introduced to the health evaluation program, saw how it aligned with my goals, and haven’t looked back since.

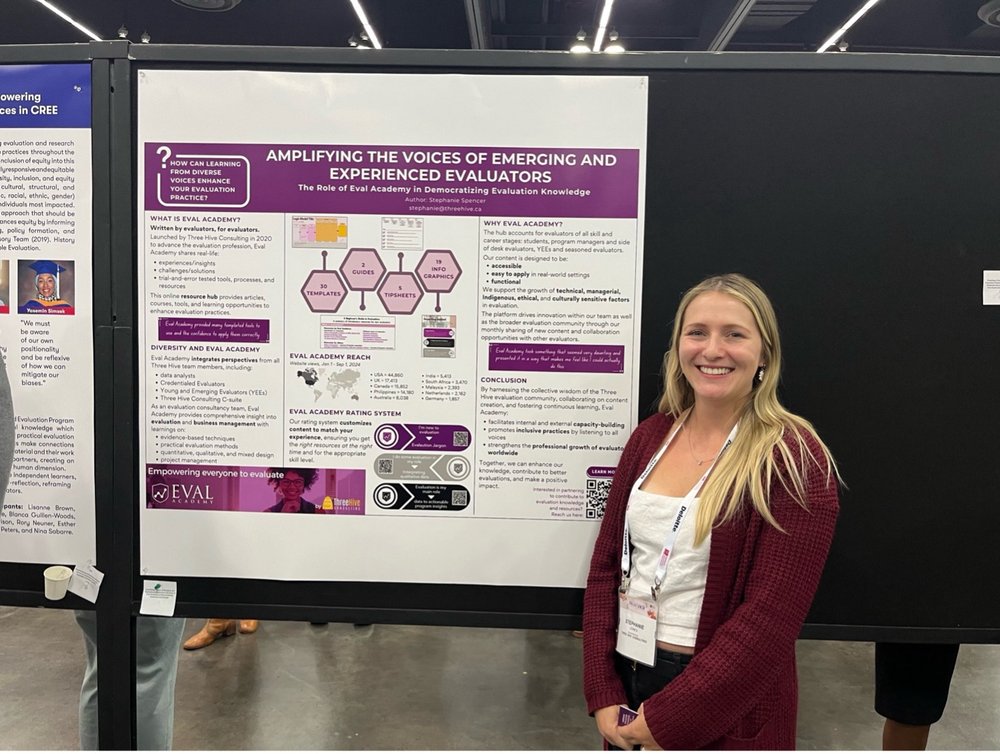

I’m currently completing my practicum at Three Hive Consulting, and in this article, I’ll share my personal experience and explore five key ways this degree has equipped me for a successful career pivot to evaluation. From broadening my analytical skills to expanding my professional network, these insights show how targeted education and a practicum helped me navigate the evolving landscape of my professional career.

1. Shared Language

In the Program Evaluation Foundations course in my Master’s, we learned about evaluation questions, logic models, evaluability assessments, utilization-focused evaluations, and more – not only understanding what they are but also identifying the qualities that make them suitable for a project’s specific needs. Good communication relies on a mutual understanding of what the other person is trying to convey, and I found that having this terminology at my fingertips helped me familiarize myself with a project’s objectives and strategy with ease. More specifically, during onboarding, my practicum equipped me with the tools to understand evaluation plans and workplans even without any direct involvement in the planning stages. Shared language enabled clear communication without misunderstandings, let me convey complex information efficiently without extensive explanation, and made it much easier to integrate into the regular workflow.

2. Effective Tools and Sound Techniques

Expanding my quantitative and qualitative skillsets was essential for enhancing my technical competencies in evaluation. While I felt my research methodologies foundation was quite strong due to my background in clinical research, I was surprised by the intricacies of designing reliable and valid data collection tools. Even when it comes to conducting a survey, the technical aspects can be quite complex. Although there isn’t a definitive gold standard for surveys, there are certainly inappropriate methods and pitfalls to avoid. The technical knowledge I gained through my formal education informed how I completed tasks and guided my workflow, helping me feel confident in the quality of my work. During my practicum, this came into play as I reviewed surveys, applying my understanding of content validity, scaling items, and avoiding response biases. I offered recommendations and clarifications to ensure a well-thought-out survey would be sent to the project team for review. Learning about effective tools and sound techniques that lead to the desired answers is important and has personally benefited my practice and the tasks I come across.

3. Addressing Common Challenges

One aspect I appreciate about my program is the opportunity to learn from the experiences of practicing evaluators, gaining insights from their challenges without having to face those challenges myself. While it’s essential to have the skills needed to perform well in your job, issues are inevitable, and understanding how other evaluators have successfully navigated similar challenges is equally important. For instance, I had never fully considered the impact that political, data, or time constraints could have on an evaluation. Questions such as “How can we navigate existing data constraints while maximizing the validity of our findings?” and “How can we effectively engage stakeholders with varying levels of power and authority to ensure a socially responsible evaluation?” have become relevant considerations in my understanding of evaluation. Learning what can be done in these circumstances or what could have been done to mitigate these situations has been enlightening. These insights and preemptive teaching strategies are what I found beneficial for future evaluation work. As I pursue a career in evaluation, having strategies to reference and prepare for potential obstacles gives me a reassuring sense of security.

4. Hands-on Experience in a Supportive Educational Setting

To me, proactive learning is all about practicing what I am learning. In this case, my practice started in a low-stakes environment where it was acceptable to make mistakes, and I received immediate feedback that encouraged growth and enriched my learning process. I had the opportunity to create deliverables at various stages of an evaluation, from responding to requests for proposals and developing logic models to writing process and outcome evaluations. Our program valued experiential learning, which supported my critical thinking in an evaluative context. This exposure allowed me to understand the processes and considerations necessary for real-world evaluation activities, so when it came time to actually carry out these responsibilities, it wasn’t the first time I had encountered such an intimidating task.

5. Practicum Experience

Although I’m only halfway through my practicum, I can share my reflections and learnings thus far. While I’m confident we’re all familiar with the benefits of field experience – such as real-world application, skill development, and mentorship – there’s a unique advantage to learning from an evaluation consultancy. Onboarding to multiple projects at various stages of evaluation, each with established timelines, is challenging, and I have found it essential to adapt and absorb as much as possible.

While I have prior experience with tasks like conducting interviews, developing surveys, analyzing data, and report writing individually, managing all these responsibilities simultaneously while aligning my focus with the various project goals is challenging. However, this has been a valuable opportunity to utilize all my skills at once within a short timeframe. I’ve gained insights into the field of evaluation from a consulting company lens, working on a wide range of local community programs and provincially established government initiatives. Contributing to program evaluation has provided me with a clearer understanding of how evaluation fits into my career aspirations and how I will fit into the evolving landscape of my professional journey.

Pursuing a graduate degree in health evaluation has enhanced my specialized knowledge by providing a comprehensive understanding of research methodologies, analytical techniques, and evidence-based practices essential for assessing health programs and interventions. Exposure to real-world case studies and collaborative projects allowed me to apply theoretical concepts to practical situations. Additionally, my program has fostered a nuanced understanding of health systems, preparing me to contribute meaningfully to evaluation practices. My graduate school experience has been a positive one, and I believe that it has given me the tools to navigate this change early in my professional career.