I just finished reading a book called Factfulness, by the late Hans Rosling, a Swedish physician and statistician who dedicated his life to promoting a more accurate and optimistic view of the world. In his book, he identifies ten common cognitive biases that distort our perception of reality and prevent us from seeing the progress and potential of humanity. He also offers practical tips on how to overcome these biases and adopt a fact-based mindset that can help us make better decisions and communicate more effectively. This was a pleasure-read book that I didn’t think had strong applications to evaluation, but by the time I finished, I couldn’t stop thinking about what these ideas meant for evaluation reporting.

As evaluators, we are constantly dealing with data, evidence, and complex problems that require critical thinking and sound judgment. We also encounter data and projects that can trigger our emotional reactions and cognitive shortcuts. I have always been interested in cognitive biases, of which there are countless versions, and how they cloud what we think is independent, objective decision-making. Rosling has a top ten, which he calls instincts, so let’s start with those and think about how we can apply the insights from Factfulness to evaluation practice.

Bias #1: The Gap Instinct

The gap instinct is the tendency to divide the world into two distinct and often conflicting groups, such as the rich and the poor, or us and them. This instinct can lead us to overlook similarities between groups and the diversity within them. It also leads us to exaggerate the differences and gaps between the two groups. Rosling suggests that we should look for the majority or the middle, rather than focusing on the extremes and the averages.

Application to evaluation: Have you ever used a Likert scale on a survey and dichotomized the responses in your reporting? For example, “75% agree and 25% disagree” or “92% were satisfied but 8% were dissatisfied”. The Gap Instinct suggests that this is possibly (or likely?) a misrepresentation, where, in reality, many of your respondents were probably somewhere in the middle, with significant overlap between the agree-ers and disagree-ers.

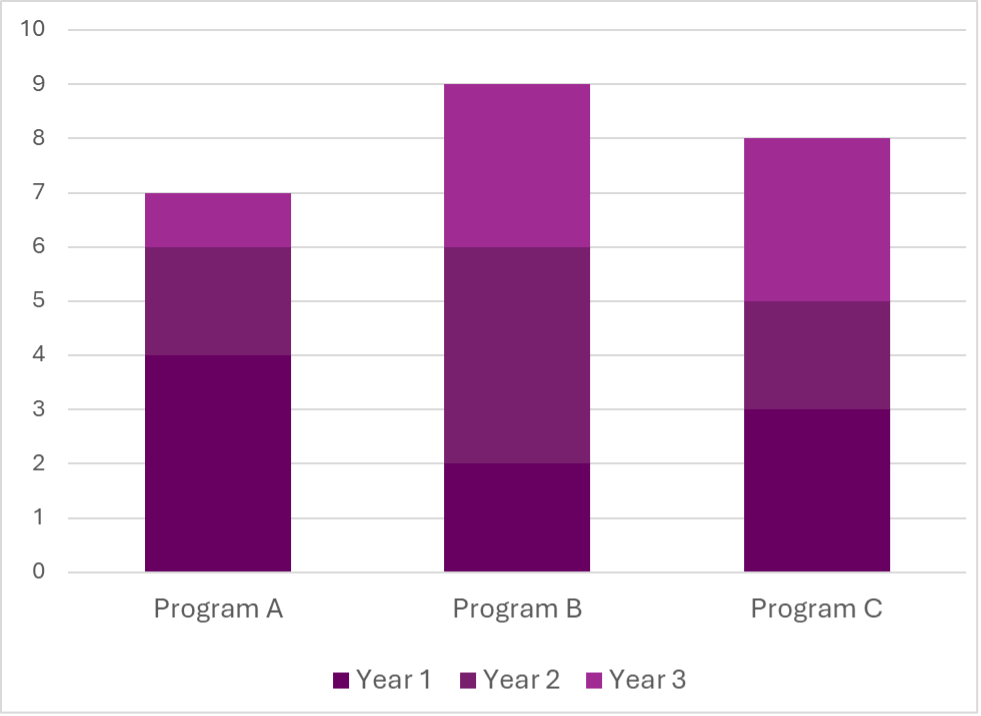
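To make the difference concrete, here is a minimal Python sketch that reports the same survey item two ways: the full distribution, and the dichotomized version. The responses, the scale labels, and the function names are all invented for illustration; they are not data from any real evaluation.

```python
from collections import Counter

# Hypothetical five-point scale and responses, invented for illustration.
SCALE = ["Strongly disagree", "Disagree", "Neutral", "Agree", "Strongly agree"]
responses = (
    ["Strongly disagree"] * 2
    + ["Disagree"] * 6
    + ["Neutral"] * 14
    + ["Agree"] * 12
    + ["Strongly agree"] * 6
)


def full_distribution(responses):
    """Percentage of all respondents at each scale point, in scale order."""
    counts = Counter(responses)
    total = len(responses)
    return {level: round(100 * counts[level] / total) for level in SCALE}


def dichotomized(responses):
    """Collapse to agree vs. disagree, dropping the neutral middle entirely."""
    counts = Counter(responses)
    agree = counts["Agree"] + counts["Strongly agree"]
    disagree = counts["Disagree"] + counts["Strongly disagree"]
    decided = agree + disagree
    return {
        "agree": round(100 * agree / decided),
        "disagree": round(100 * disagree / decided),
    }


print(full_distribution(responses))
print(dichotomized(responses))
```

With these made-up numbers, the dichotomized report reads "69% agree and 31% disagree", while the full distribution shows that "Neutral", at 35%, is the single most common answer.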

The Gap Instinct also applies when we compare two (or more) averages. For example, if you report “On average, Program A has 100 participants a year, and Program B has 200 participants a year,” it can lead to misunderstanding and exaggeration of the differences between the two programs, when in reality any given session of either program may have significant overlap in the number of attendees.

Accurate reporting is representative reporting, including ambivalence or indifference, and including ranges, medians or modes if they better represent reality.
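As a rough illustration, the sketch below summarizes two programs by mean, median, and range, and checks whether their ranges overlap. The per-session attendance figures and the function names are hypothetical, chosen only so that one mean is double the other.

```python
from statistics import mean, median

# Hypothetical per-session attendance, invented for illustration.
program_a = [4, 6, 8, 9, 10, 11, 12, 20]
program_b = [5, 9, 10, 12, 14, 18, 30, 62]


def summarize(attendance):
    """Mean alongside the median and range, which may better represent reality."""
    return {
        "mean": mean(attendance),
        "median": median(attendance),
        "min": min(attendance),
        "max": max(attendance),
    }


def ranges_overlap(a, b):
    """True if the lowest-to-highest ranges of the two programs intersect."""
    return min(a) <= max(b) and min(b) <= max(a)


print(summarize(program_a))
print(summarize(program_b))
print(ranges_overlap(program_a, program_b))
```

In this invented example, Program B's mean is twice Program A's (20 versus 10), but the medians are much closer (13 versus 9.5) and most sessions fall in the same range, because a couple of very large sessions pull Program B's average up.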

Bias #2: The Negativity Instinct

The negativity instinct is the tendency to notice and remember the bad more than the good, and to assume that things are getting worse. This instinct can make us pessimistic, cynical, and fatalistic, and blind us to the positive changes and opportunities that are happening in the world. Rosling suggests that we should balance our negative impressions with positive facts and recognize that bad and good can coexist and that improvement is possible.

Application to evaluation: I recently wrote a report where my client said, “It reads as very critical of us.” I was surprised. That wasn’t my intent, nor did I think the data pointed to being overly critical. Sure, there was some room for change and improvement, but I didn’t think the key takeaway was criticism. After reading Factfulness, I now think this reaction was the Negativity Instinct in action. My client was focusing on the bad more than the good.

We’ve written before about how to present bad results. I do think it is our role to share negative and unexpected findings, but I think in the future I’ll be more cautious of this bias to focus on the negative. That doesn’t mean we need to hide bad results in overly flowery or optimistic language, but I also don’t want clients to focus only on the negative.

An overarching theme of Factfulness is that things can have room for improvement but also be improving at the same time or co-exist with good things.

Bias #3: The Straight Line Instinct

The straight line instinct is the tendency to assume that a trend will continue in a straight line, without considering the factors that might affect its direction, speed, or shape. This instinct can make us overconfident, complacent, or fearful, and lead us to make inaccurate predictions and projections. Rosling suggests that we should look for curves, bends, and levels, and remember that most trends are S-shaped, not linear. To illustrate, Rosling uses the example of global population forecasting.

Application to evaluation: I think there is a potential evaluation application anytime we present data over time. I know I often look at line chart trajectories and assume they will continue without pausing to reflect on a potential plateau or factors that may influence that trajectory (e.g., seasonality).

My time working in quality improvement taught me a rule of thumb for run charts: six or more consecutive points on the same side of the median suggest a non-random pattern. Simple rules like this can help in evaluation so that we are not over- (or under-) emphasizing trajectories.
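One common form of this kind of rule in run chart practice is to flag six or more consecutive points on the same side of the median. Here is a minimal sketch of that check. The threshold of six, the choice to skip points that sit exactly on the median, the function names, and the monthly figures are all assumptions made for illustration, and different guides set these details differently.

```python
from statistics import median


def longest_run_one_side(values):
    """Longest run of consecutive points on one side of the median.

    Points exactly on the median are skipped: they neither extend
    nor break a run (an assumed convention).
    """
    centre = median(values)
    longest = current = 0
    last_side = None
    for value in values:
        if value == centre:
            continue
        side = value > centre
        current = current + 1 if side == last_side else 1
        last_side = side
        longest = max(longest, current)
    return longest


def has_shift(values, run_length=6):
    """True if some run on one side of the median reaches run_length."""
    return longest_run_one_side(values) >= run_length


# Hypothetical monthly counts, invented for illustration.
stepped = [12, 14, 11, 13, 12, 15, 18, 19, 17, 20, 18, 21]
noisy = [12, 18, 11, 19, 13, 17, 12, 20, 14, 18, 11, 19]

print(has_shift(stepped))
print(has_shift(noisy))
```

The first series steps up halfway through and is flagged; the second bounces around its median and is not, even though both end higher than they start.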

Bias #4: The Fear Instinct

The fear instinct is the tendency to pay more attention to things that are scary, dangerous, or threatening, and to overestimate their probability and impact. This instinct can make us anxious, paranoid, and irrational, and prevent us from taking reasonable risks and opportunities. Rosling suggests that we should distinguish between risks and fears and calibrate our level of worry to the actual level of harm.

Application to evaluation: I think this applies to the decision-making and action that we encourage our clients to take after an evaluation. We can encourage our clients to think about the likelihood of, and their exposure to, certain scenarios. Project management tools that rate risk levels and likelihood are probably helpful here.

Bias #5: The Size Instinct

The size instinct is the tendency to focus on the size or quantity of something, without considering its proportion, perspective, or relevance. This instinct can make us impressed, amazed, or alarmed, and lead us to misinterpret or misuse numbers and statistics. Rosling suggests that we should compare, divide, and contextualize, and use ratios, proportions, and comparisons to make sense of numbers.

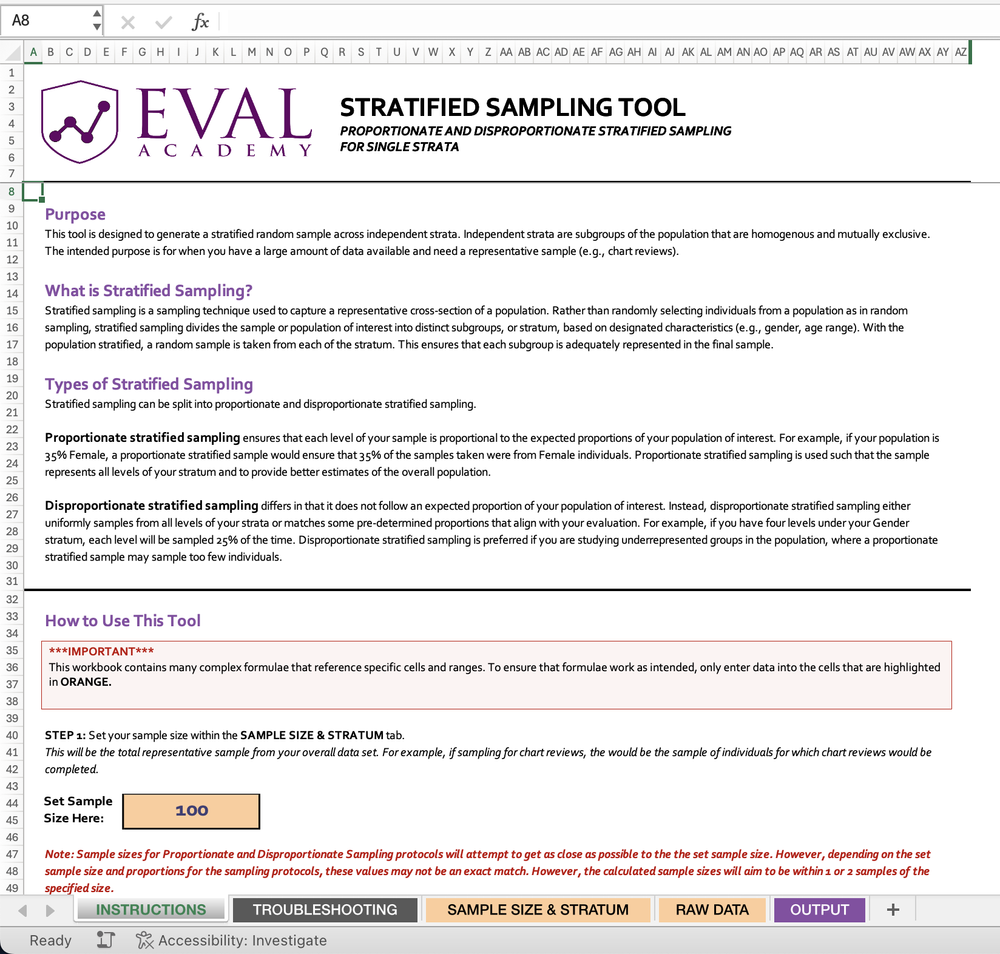

Application to evaluation: Have you ever seen the recommendation to report a single number? It’s literally on the Quantitative Chart Chooser I have stuck to my wall. This approach to data visualization is purported to make your message memorable and sticky. Rosling, however, suggests it can be misleading without context.

If you report “90% of participants loved the program”, how does your audience know whether it was 97% last year? Or that the 90% actually represents only the 10 participants who completed surveys, not all 73 participants? Of course there are solutions, one being to ensure your data analysis itself isn’t misleading, for example by not giving too much weight to small samples. Reporting a single big number may be appropriate in evaluation if your audience has all the necessary context, including the denominator or any key differences over time or between groups.
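One way to keep the denominator attached to the headline number is to generate the sentence from the counts themselves. The sketch below uses the 10 respondents and 73 participants from the example above; the count of 9 positive responses is my assumption (90% of 10), and the function name and wording are hypothetical.

```python
def describe_result(positive, respondents, total_participants):
    """Build a headline sentence that carries its own denominators."""
    pct = round(100 * positive / respondents)
    response_rate = round(100 * respondents / total_participants)
    return (
        f"{pct}% of respondents ({positive} of {respondents}) loved the program; "
        f"{respondents} of {total_participants} participants "
        f"({response_rate}%) completed the survey."
    )


# 9 positive responses is assumed; 10 and 73 come from the example in the text.
print(describe_result(9, 10, 73))
```

The big number is still there, but a reader can now see that it rests on 10 surveys and a 14% response rate.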

Another application to evaluation is when you report lists. Reporting a large list gives the impression that every item on the list is equally weighted, but in reality it’s likely that a smaller proportion have the greatest impact. For example, if you have a list of influencing factors on a specific outcome, it is likely that there are just a handful that have the greatest influence. In Factfulness, Rosling uses the example of pharmaceutical commercials that list off a dozen side effects, ranging from itchy feet to heart failure. By listing them all, the audience struggles to apply appropriate weight and meaning to each item on the list and can end up ignoring them all, leading to poor outcomes through inaction.

Bias #6: The Generalization Instinct

The generalization instinct is the tendency to categorize and label things, people, and places, and to assume that they are homogeneous, static, and typical. This instinct can make us stereotypical, prejudiced, and ignorant, and prevent us from seeing the diversity, complexity, and uniqueness of reality. Rosling suggests that we should question our categories, look for differences and changes, and beware of the majority illusion.

Application to evaluation: The key here is to look for differences. It is unlikely that your program participants are homogeneous in all regards. What makes them different? And how can you understand those differences? Solutions may be about the demographic or characteristic questions you collect, or about how you stratify and analyze your data. Though some clients may not explicitly ask for a gender-based analysis or exploration into participant characteristics, these deeper dives in analysis may add valuable insights to your reporting.
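A small sketch of what stratifying can reveal: the same satisfaction question, reported overall and then by participant group. The records, the "first-time" and "returning" groups, and the function names are all hypothetical.

```python
from collections import defaultdict

# Hypothetical survey records, invented for illustration.
records = (
    [{"group": "first-time", "satisfied": True}] * 5
    + [{"group": "first-time", "satisfied": False}] * 5
    + [{"group": "returning", "satisfied": True}] * 9
    + [{"group": "returning", "satisfied": False}] * 1
)


def percent_satisfied(records):
    """Percentage of records marked satisfied."""
    satisfied = sum(1 for r in records if r["satisfied"])
    return round(100 * satisfied / len(records))


def percent_satisfied_by(records, key):
    """Percentage satisfied within each value of the given characteristic."""
    groups = defaultdict(list)
    for r in records:
        groups[r[key]].append(r)
    return {name: percent_satisfied(members) for name, members in groups.items()}


print(percent_satisfied(records))
print(percent_satisfied_by(records, "group"))
```

Here the overall figure of 70% hides a split of 50% among first-time participants and 90% among returning ones. With groups this small, the counts should of course be reported alongside the percentages.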

Bias #7: The Destiny Instinct

The destiny instinct is the tendency to believe that things are predetermined by nature, culture, or history, and that they cannot or should not change. This instinct can make us fatalistic, resigned, or resistant, and stop us from recognizing the potential and agency of ourselves and others. Rosling suggests that we should keep track of gradual changes, acknowledge the power of human intervention, and celebrate the progress that has been made.

Application to evaluation: For me this comes into play when making recommendations. It’s likely I’ve omitted recommendations because they seem unlikely to change, perhaps governed, in my biased view, by nature, culture, or history. Even small changes can accumulate over time to big changes; perhaps, as evaluators, our recommendations don’t all have to be system-level change, or program restructuring change, but can leave the door open for small changes that slowly shift the way things are done.

Bias #8: The Single Perspective Instinct

The single perspective instinct is the tendency to adopt a single idea, discipline, or framework, and to apply it to everything, without considering other perspectives, dimensions, or angles. This instinct can make us dogmatic, narrow-minded, or biased, and limit our understanding and creativity. Rosling suggests that we should use multiple perspectives, tools, and methods, and seek out different viewpoints and sources of information.

Application to evaluation: For me, the application here is in evaluation methodology. This is the “if you have a hammer, everything is a nail” adage. I think it’s easy to get stuck in ruts as evaluators and rely on the trusted surveys, interviews and focus groups without stopping to think if other data collection strategies might be more effective. Here are a few suggestions: World Cafés, Photovoice, Outcome Harvesting, or Arts-Based Data Collection.

Bias #9: The Blame Instinct

The blame instinct is the tendency to look for a scapegoat, a villain, or a hero, and to attribute the cause or solution of a problem to a single individual, group, or factor. This instinct can make us angry, judgmental, or naive, and distract us from the systemic and structural causes and solutions of complex problems. Rosling suggests that we should resist pointing fingers; look for causes, not villains; and look for systems, not heroes.

Application to evaluation: I have definitely fallen into this trap. I worked on a project that wasn’t implemented well and didn’t produce the outcomes it had aimed for. From my arm’s-length, contracted evaluator position it looked obvious to me that poor communication was a likely culprit. Sure, poor communication was a key factor, but the risk here is that I had found my thing-to-blame and stopped looking for other answers or solutions. Evaluations should be comprehensive and offer multiple perspectives.

Bias #10: The Urgency Instinct

The urgency instinct is the tendency to act quickly and impulsively, without taking the time to gather evidence, analyze data, or think critically. This instinct can make us stressed, panicked, or reckless, and lead us to make hasty and poor decisions. Rosling suggests that we should take a breath and insist on data.

Application to evaluation: The application here may take the form of how we encourage our clients to take action with a level head, but I think there is another application. Rosling says you should always insist on data. As evaluators, we can triangulate our data with appropriate perspectives and sample sizes so that our clients are given a full story on which to base their decisions.

It’s been on my to-do list for some time to write about biases in evaluation, but reading this book finally motivated me to get started. What are some biases that you keep top of mind? I’d love more direction on how evaluators can work against our biases in practice.

For more info on Rosling and his family’s work to present an accurate worldview, check out https://www.gapminder.org/ and https://www.gapminder.org/dollar-street.