Remember how I said you couldn’t add alt text in Canva? Well, you can now!

Transcript

Hey, so you may already know that I am a huge fan of Canva. I use it for all sorts of things, from presentations to infographic design. And last year they came out with a web design tool, which is great, except for one thing: there was absolutely no way to add alternative text. Meaning you couldn’t add any kind of descriptions. You couldn’t change the header structure, all sorts of things that you would need to do to make a web design accessible.

And this is really important in general. But especially if you work on government kind of projects, it’s essentially a killer. You can’t do the work if you can’t make it accessible. Well, I was playing around with Canva the other day and I clicked on an image. And what did I find? I found a little button.

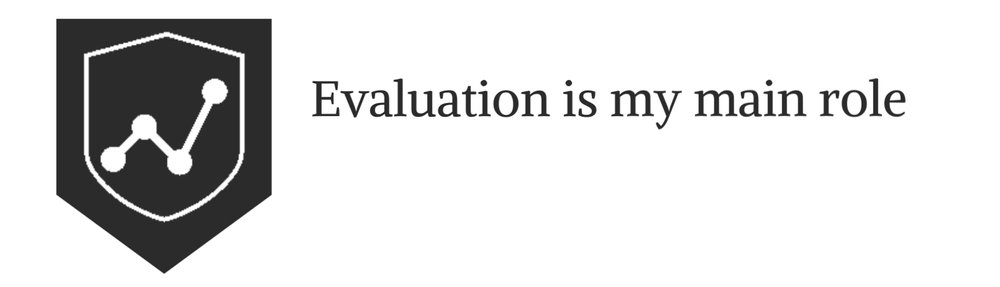

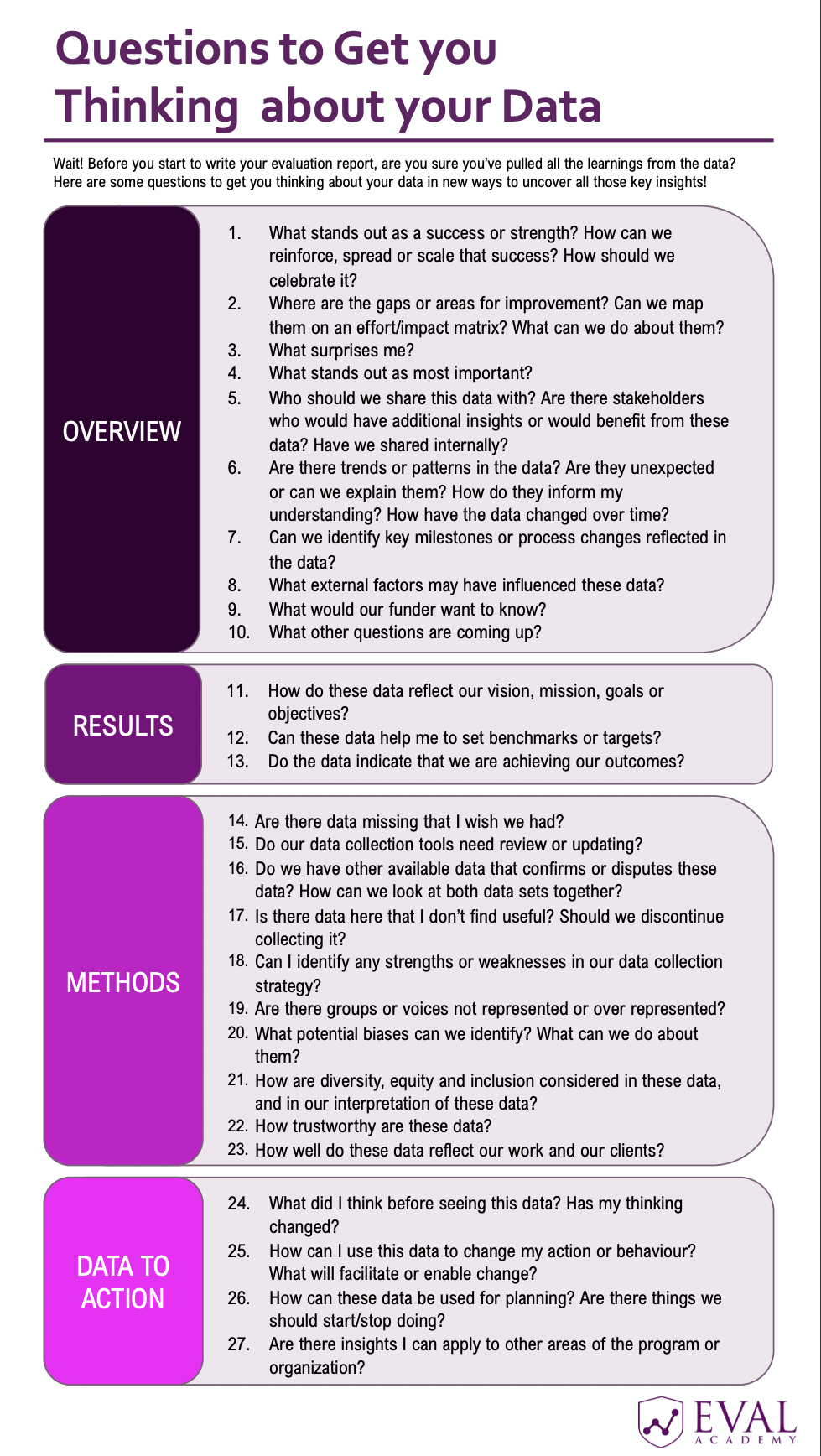

It says “alternative text.” Yes! Now Canva at least has the bare minimum of what you need to create something that is accessible to share online. Just to show you, here’s a picture up here somewhere of a website I was creating, just a little simple test website I created in Canva. And then I put in a picture.

Now, if you right-click on the picture, you’ll see a little alternative text button on the menu. And here, let’s zoom in. Okay. Now, if you go ahead and click on that, a box will pop up. You have up to 250 characters of alternative text that you can add in this box. You can also click the little button to say that it’s decorative and doesn’t really have a meaning other than to add decoration to the page.
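For anyone curious what those two settings usually look like on the HTML side, here is a minimal sketch in Python. It assumes the published page uses standard `<img>` tags with an `alt` attribute, where an empty `alt=""` is the common way to mark an image as decorative. How Canva actually writes its published HTML is not shown in the video, so treat that as an assumption. The `check_alt_text` function and its status labels are hypothetical names made up for illustration.

```python
from html.parser import HTMLParser

MAX_ALT_LENGTH = 250  # the character limit mentioned for Canva's alt text box


class AltTextChecker(HTMLParser):
    """Records an alt text status for every <img> tag it sees."""

    def __init__(self):
        super().__init__()
        self.results = []  # list of (src, status) tuples

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src", "")
        if "alt" not in attributes:
            status = "missing"  # no alt attribute at all
        else:
            # A bare `alt` with no value is reported as None; treat it as empty.
            alt = attributes["alt"] or ""
            if alt.strip() == "":
                status = "decorative"  # empty alt: assumed to mean decorative
            elif len(alt) > MAX_ALT_LENGTH:
                status = "too long"
            else:
                status = "described"
        self.results.append((src, status))


def check_alt_text(html):
    """Return a (src, status) tuple for each image in an HTML string."""
    checker = AltTextChecker()
    checker.feed(html)
    return checker.results


# Hypothetical sample markup, not taken from a real Canva page.
page = (
    '<img src="logo.png" alt="Company logo">'
    '<img src="swirl.png" alt="">'
    '<img src="chart.png">'
)
print(check_alt_text(page))
# [('logo.png', 'described'), ('swirl.png', 'decorative'), ('chart.png', 'missing')]
```

A real accessibility checker looks at far more than this, but images with no alt text at all are one of the first things it tends to flag.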

So that’s it. Those are really standard requirements. Now, if you’re doing kind of serious design work, it’s still probably better to work with WordPress or PDFs if you need to do some good accessibility work. There are things you still can’t do in Canva, because you can’t really adjust the underlying HTML code. You can’t change, like, the header structure.

In a way, you can’t mark up H1 and the other heading levels and paragraph markings. It’s getting there. I think it’ll be there eventually. There is some reordering of elements. They’re coming along, and it’s a long way from where it was before. And here, it actually even passed a test. I went ahead and put it into an accessibility checker.

And yeah, it’s the bare minimum pass, but it passed. And that’s something I couldn’t say before. So there you go. That’s it. Canva has alt text. So rejoice, and hopefully they’ll continue to improve accessibility and we’ll be able to use it more often in the future. All right. That’s it for today. Have a great one. I’ll talk to you soon. Bye.