
IRB 101: What types of human subjects research are exempt from IRB?

In this IRB 101 series, I have provided general context for IRBs, including what IRBs are, why they exist, and the potential risks to research participants that illustrate IRBs’ purpose. This post will help you determine whether your study, if it is indeed human subjects research, is exempt from IRB review.

What are exemptions?

The Office for Human Research Protections (OHRP) within the Department of Health and Human Services (HHS) oversees the federal regulations for human subjects research. One part of the regulations, known as Subpart A or the Common Rule, identifies exemption categories for human subjects research. OHRP updated the Common Rule in 2018. OHRP now identifies eight categories of human subjects research that are considered exempt from HHS’s regulatory requirements. All eight categories are listed here, in technical language.

Exemptions applicable to visitor studies research and evaluation

Interpreting the technical language of the eight exemption categories can be confusing. Here, I describe (in language as plain as possible) the four exemption categories most relevant to visitor studies research and evaluation in museums: three that are likely to apply, and one that does not. Keep in mind that all exemption categories describe research practices that pose no more than minimal risk to research participants. Each category identifies what about the research process keeps that risk minimal.

Exemption Category 2

Are you collecting surveys, interviews, or observations of public behavior?

If yes, your study may be exempt under Exemption Category 2. The study is exempt if the identities of the human subjects (the people you are collecting data from) cannot be readily ascertained (e.g., the data are anonymous, or are delinked from identifiers like names or email addresses and kept confidential), OR if disclosing participants’ identities would not have detrimental consequences or pose risks to them.

Examples of exempt research in this category:

- An anonymous survey of a random sample of adult visitors

- Interviews with teachers about their program experience

- Unobtrusive observations of families at the museum

For research with children, observations of public behavior can be exempt under this category (e.g., observations of families at the museum). However, surveys or interviews with children are not exempt under this category, because any interactions with children are subject to greater oversight.

Exemption Category 3

Are you collecting study information involving a benign behavioral intervention (e.g., changing noise in exhibitions) OR using audiovisual recording?

If yes, the study may be exempt under Exemption Category 3 if participants agree to take part in advance. In addition, as in Exemption Category 2, participants must not be readily identifiable, OR disclosure of their identities must not have detrimental consequences or pose risks.

Examples of exempt research in this category:

- An experimental study where adult visitors consent to experience an interactive both with and without ambient noise (i.e., a benign intervention)

- Audio-recorded interviews with teachers about their program experience (with their consent)

- Adult visitors video recording their visit to an exhibition after consenting to participate in the study

No research with children is exempt under this category.

Exemption Category 4

Are you analyzing old data sets that other researchers collected?

If yes, the study may be exempt under Exemption Category 4. This category covers “secondary use” of data, meaning research with information originally collected for another purpose. Secondary use research is usually exempt from IRB review when the information is publicly available or was recorded in a way that participants can no longer be identified.

Examples of exempt research in this category:

- Analyzing publicly available open access data

- Analyzing data previously collected by another researcher (with IRB approval), where the data you receive have been delinked from identifiers

Exemptions NOT applicable to visitor studies research and evaluation

Exemption Category 1

It may be tempting to say visitor studies research is exempt under Exemption Category 1. This category describes research in commonly accepted educational settings that involves normal educational practices. While museums might consider themselves educational settings, research in museums is not exempt under this category because museums are not considered “commonly accepted educational settings.”

Still feeling lost?

Are you still unsure whether you need IRB review? In my next post, I will share a decision tree that distills this information even further.

The post IRB 101: What types of human subjects research are exempt from IRB? appeared first on RK&A.

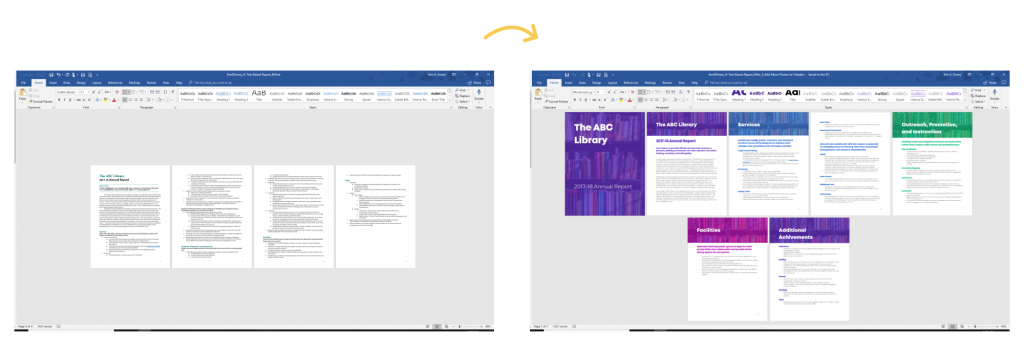

10 Tips for Redesigning Reports

2011 called.

It wants its 100-page reports back.

My wish: Limit yourself to just 30 pages (or fewer!).

It wants its portrait reports back.

Are people printing your doc… or reading it from their (landscape) computer?

It wants its text-heavy reports back.

We need visuals on every single page.

Ready to revamp your technical reports?


Here are 10 quick wins to improve your next text-heavy document.

You don’t need to apply all 10.

Even one of these techniques will make dense reports more readable for our non-technical and busy audiences.

Design a One-Pager

The 30-3-1 Approach is the bare minimum for designing reports that actually inform decisions. You can read more about 30-3-1 here.

When you’re creating one-pagers, don’t forget to add at least ½ inch of white space between each graph so the page doesn’t feel smushed.

It’s tempting to try to fit everything into a one-pager, but a one-pager is just the highlights; the full report can go into more detail.

Use Brand Colors and Fonts

Never, ever, ever use your software program’s defaults.

I’m looking at you, Calibri.

If you’re using Microsoft Office programs, like Excel, Word, or PowerPoint, then Theme Colors and Theme Fonts can save you hours.

Start with the “So What?”

The Key Findings and Next Steps deserve to be shared earlier (not buried in the last few pages of our docs).

Use Landscape Orientation

Will you pay to print your reports and mail them to your recipients?

If not, they’ll probably read it on their (landscape) computer screen.

Add a Cover

We can make beautiful, engaging report covers in 20 minutes or less, inside software we already have.

Here’s one of my favorite before/after transformations from Sara DeLong:

Chunk with Dividers

Begin each chapter with a dark, visually striking divider page to help break up the content into small bites.

Add 1+ Visual Per Page

Think of a recent report: how many pages had visuals?

The Text Wall takes too long to read.

Add a Variety of Visuals

Not just tables.

Humanize reports with photos, too.

You can grab my Checklist of 15 Types of Visuals from this podcast with Alli Torban.

Go Beyond the Bar Chart

My old reports: bars, clustered bars, stacked bars, and column charts.

Zzzzzzzzzzzzz…

Lower the Reading Level

I suggest writing two education levels below your audience (e.g., readers with Master’s degrees would get Grade 9-12 writing).

Your Turn

Which tip will you apply to your next technical report? Comment anytime and let me know.


How can we incorporate diversity, equity and inclusion in evaluation

In the past couple of years, there has been an increasing focus on diversity, equity, and inclusion (DEI) in evaluation. More and more practitioners and organizations, including the Canadian Evaluation Society, are grounding their work in equity and providing guidance to other evaluators. Recognizing that equitable evaluation is an emerging area of work, this article aims to add to the growing discussion. While it is not an exhaustive list of issues and strategies, it will help you start introducing changes to your evaluation practice.

Grounding an evaluation in DEI means the evaluation is equity-focused, culturally responsive, and participatory. In addition, such evaluation examines structural and systemic barriers that create and sustain oppression.

Inclusive and equitable evaluation requires continuously unlearning old practices and learning new ones. Questioning, practicing, and reflecting are also important in DEI. To bolster equity efforts, re-examine current practices and paradigms: historically and presently, well-intentioned evaluation practices have at times held back equity efforts and, in some cases, reinforced inequalities.

Adopting DEI in evaluation starts with the organization. Implementing DEI in the workplace is an integral step toward implementing inclusive and equitable evaluation. In fact, it is challenging to implement equitable evaluation without organizational adoption and buy-in, as it requires explicit leadership support and the right organizational setting. DEI is not a quick fix; rather, it is a continuous commitment to achieving equitable results.

Adoption of DEI in an organization may include the following:

- An explicit DEI strategy and performance measurement plans;
- DEI systems embedded in the culture and practiced consistently;
- Visible commitment and accountability from leadership in incorporating DEI in decision making;
- DEI practiced in recruitment and career advancement; and
- Continuously evaluating DEI efforts, collecting data on DEI indicators, and adopting changes.

Within each evaluation, adopting DEI starts as soon as the evaluation is initiated.

1. Evaluation Planning

Context

Aim to understand the community and the system in order to successfully engage and partner with communities and program recipients. Learning about the social, cultural, historical, and political context is critical to implementing values-based and culturally relevant evaluation that promotes equity and justice. In addition, identify whose voices have been silenced and whose voices are seen as the “truth,” and aim to understand the power dynamics driving the current reality.

For example, to effectively evaluate a program focusing on public safety in Canada or the USA, the evaluator needs to understand the current and historical violence and mistreatment of Black and Indigenous people by police and the legal system.

Similarly, to evaluate vaccine hesitancy and/or resistance in Black and Indigenous communities, it is imperative to understand the widespread racism in medical research and medical care. Many in Black and Indigenous communities distrust medical professionals and the government, as they have historically faced structural and systemic barriers to accessing services and have been excluded from supports.

Team selection

Select a strong evaluation team with a good mix of skills, experience, knowledge, and perspectives. If possible, include representatives of the community being evaluated, and balance diversity in the team by considering gender, ethnicity, content knowledge, methodological expertise, and expertise in participatory research and equity-focused evaluation.

Stakeholders

Include key stakeholders in the evaluation. Stakeholders make important evaluation decisions, from which evaluation questions get asked, to methodology, all the way to reporting. Intentionally and carefully select stakeholders to include in evaluation committees and in evaluation activities beyond data collection.

Historically, individuals from marginalized communities have not had a chance to participate in evaluation beyond providing data. Involve community members as stakeholders, co-creators, and collaborators and provide appropriate compensation. In addition, determine the degree of stakeholder participation, and clearly identify stakeholder roles (e.g., participate, consult, or make decisions). In some cases, engage stakeholders separately if there are power dynamics that cannot be resolved.

Evaluation Questions

Evaluation questions are the backbone of an evaluation, as all efforts are focused on addressing them. Because these questions drive the evaluation, include questions relevant to all stakeholders, especially community members. It is common practice to focus on and prioritize evaluation questions from program funders or leaders; however, this practice does not examine program recipients’ values or the systemic and structural causes of problems in the community. Evaluations that focus only on the funder’s questions are likely to perpetuate the cycle of inequity and fail to address the root causes of the problem the program is attempting to address. (See our article for more tips on writing evaluation questions.)

Evaluation design

In the past, quantitative data have been viewed as more rigorous and accurate; however, both quantitative and qualitative data have value in DEI evaluation. A mixed methods approach is ideal for equitable evaluation because it combines the strengths of quantitative data (precise estimates, statistical differences, and breakdowns into subgroups) with those of qualitative data (detailed descriptions, lived experiences, and complex narratives). Although mixed methods are preferred, be sure to select an evaluation design that suits the program and its context.

2. Data collection and analysis

Culturally appropriate methodology

Design the data collection approach to respect and fit the community’s traditions, norms, and standards, while still applying best practices in evaluation.

Minimize bias

Bias is inevitable; however, considerable effort should be made to reduce all kinds of bias in data collection and analysis. Bias can arise, for example, when surveys ask leading questions, or when certain populations are over- or under-represented and the analysis fails to account for it. Identify potential biases and strategies to address them before and during the data collection and analysis stages.

Balance inclusivity and burden

Aim for adequate representation of marginalized communities in data collection, while considering the burden on the participants. Individuals from the community can provide valuable information; however, such benefits must be weighed against the harm done to individuals if the evaluation data collection efforts pose a significant burden.

Individuals from marginalized communities have often served, and continue to serve, as a data source for evaluation and applied research, with minimal benefit to their communities. For example, the Downtown Eastside in Vancouver is home to some of the most marginalized and transient populations in Canada, with high rates of mental illness, substance use, communicable disease, homelessness, and crime. The area has been the subject of extensive evaluation and research, serving as a data source for a considerable literature published in peer-reviewed journals, reports, and dissertations. Although evaluation findings from this area have influenced policies and practices locally and internationally (e.g., the use of safe consumption sites), the main problems and burden of disease observed in the area persist.

Identity-based data

When collecting identity-based data such as ethnicity and gender, examine the utility, benefit, and relevance of the data for promoting the well-being and rights of community members. Collecting identity-based data consistently from marginalized communities can demonstrate inequity in the system. For example, in the US, analyzing the burden of COVID-19 by ethnicity/race showed that Indigenous (2.4x), Black (2.0x), and Hispanic/Latino (2.3x) communities were more likely to be infected, hospitalized, and die from COVID-19 than white, non-Hispanic communities.

To promote equity and inclusion, use the data appropriately and safeguard it to ensure confidentiality and security. In addition,

- ensure data quality to prevent further harm to marginalized communities;
- collect data that examine and reflect structural disadvantages, root causes, and/or discrimination; and
- ensure that the evaluation team members involved in the collection, use, analysis, and reporting of identity-based data are familiar with and adhere to privacy policy and legislation.

3. Reporting

Before reporting

Before formally documenting the evaluation results in a report, discuss the main findings with stakeholders. These early conversations show stakeholders how their contributions were used and give them the chance to correct inaccuracies and clarify misrepresentations. When selecting participants for these discussions, refer to the stakeholder mapping done at the planning stage, paying special attention to members of marginalized communities, who are often left out of discussions due to multiple constraints. Facilitate these discussions inclusively, providing adequate time and space for reflection and meaningful participation.

Reporting

A good evaluation report will ensure the data is duly captured with balanced perspectives and fair representation of different points of view. The evaluation report is the most important evaluation deliverable, so methodology, limitations and findings need to be described in detail. When possible, the report should identify root causes and systemic and structural barriers.

In addition, ensure that the evaluation report

- uses language and terms that are suitable for the community (e.g., the use of pronouns);
- uses images intentionally and critically so that they do not perpetuate stigma (e.g., using images of homeless people when evaluating a substance abuse program); and
- uses visualizations that are appropriate and culturally relevant (e.g., red dots on a city map showing service recipients can make individuals feel like they are a problem or a burden for their community).

Overall, DEI in evaluation is a commitment to question our current standard of practice and continually reflect on our work to enhance programs, services, and systems for all, including marginalized and worst-off communities.

Check out our Program Evaluation Standards article and resource.


Sources:

- Dean-Coffey, J., Casey, J., & Caldwell, L. D. (2014). Raising the Bar – Integrating Cultural Competence and Equity: Equitable Evaluation. The Foundation Review, 6(2). https://doi.org/10.9707/1944-5660.1203