You know the story of Goldilocks and the three bears?

A little girl breaks into the house of three bears while they’re out for a walk. She then proceeds to eat their food, break one of their chairs, and eventually fall asleep in one of their beds.

The story came to mind when I was thinking about modern reporting challenges. Which I know makes absolutely no sense at all, but I stopped trying to understand my mind years ago. But I digress.

I think there is a lot we can get from the story that we can apply to how we report. Just not in an obvious way.

Goldilocks and the Three Reports

One day Goldilocks was talking to her organization’s evaluator about a few of the reports set to go out to their stakeholders.

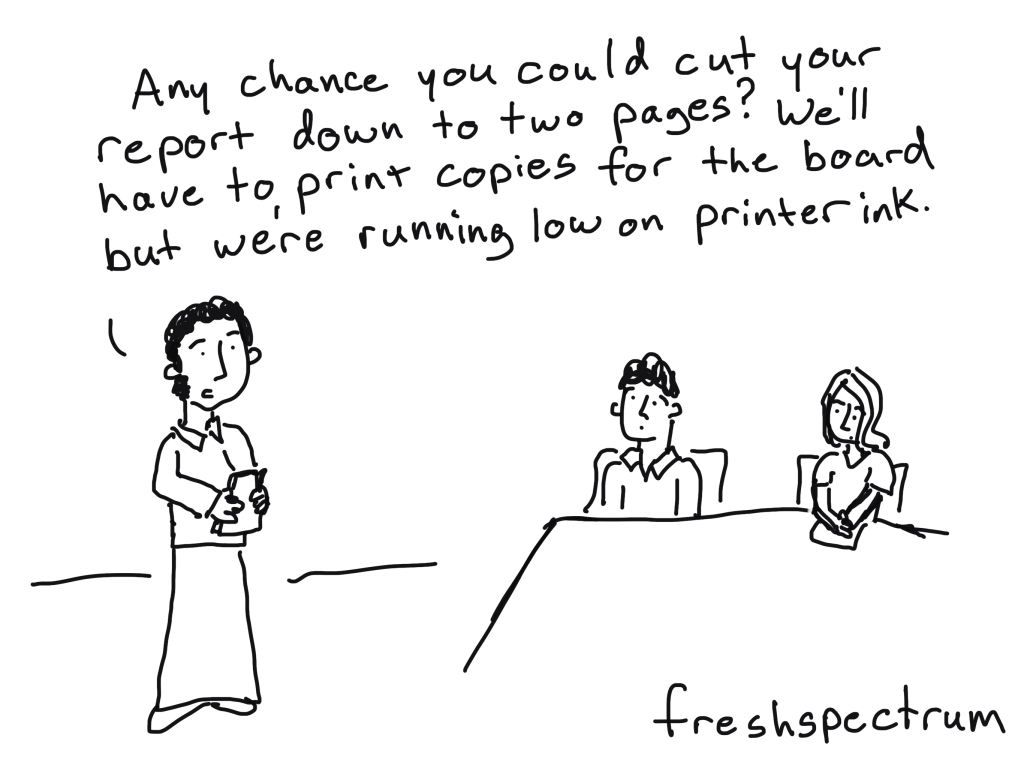

According to Goldilocks, the first report, the one designed for the large bear, was too long. It also had too few pictures and charts.

The second report, the one designed for the medium bear, was too short. This one had too many pictures and charts given the length.

Now the third report, the one designed for the little bear, was just right. Goldilocks loved everything about this report.

And because Goldilocks was the evaluator’s direct supervisor, she instructed the evaluator to trash the too-long report and the too-short report. After all, the “just right” report is the best of the bunch, and why should the organization share anything that’s not the best?

So what’s the problem?

The “just right” report is only “just right” for Goldilocks (who is not the target audience) and for one of the three bears (who are part of the target audience).

By picking just the one report, she excluded 67% of the target audience. Not because the other reports didn’t work, but because the other reports didn’t match her vision of a good report.

Unfortunately, this happens all the time.

We often design reports for just a small portion of our audience. And the reports that get the green light are the ones preferred by those with authority.

What to do instead.

The simple answer: create and share all three reports. Actually, create more reports than that if you can.

Stop assuming that one report can do it all.

Want to learn how to approach reporting in a modern kind of way?

Join me for a free webinar on July 18 at 3 PM Eastern.