If I see one well-intended mistake my customers make over and over again, it’s that they confuse “doing data” — having a flurry of data-related activities — with BEING data driven. The organizations that first take the time to address the culture — or being — part of the process are the ones that ultimately see the biggest shift towards a culture that thrives with open, challenging conversations with data.

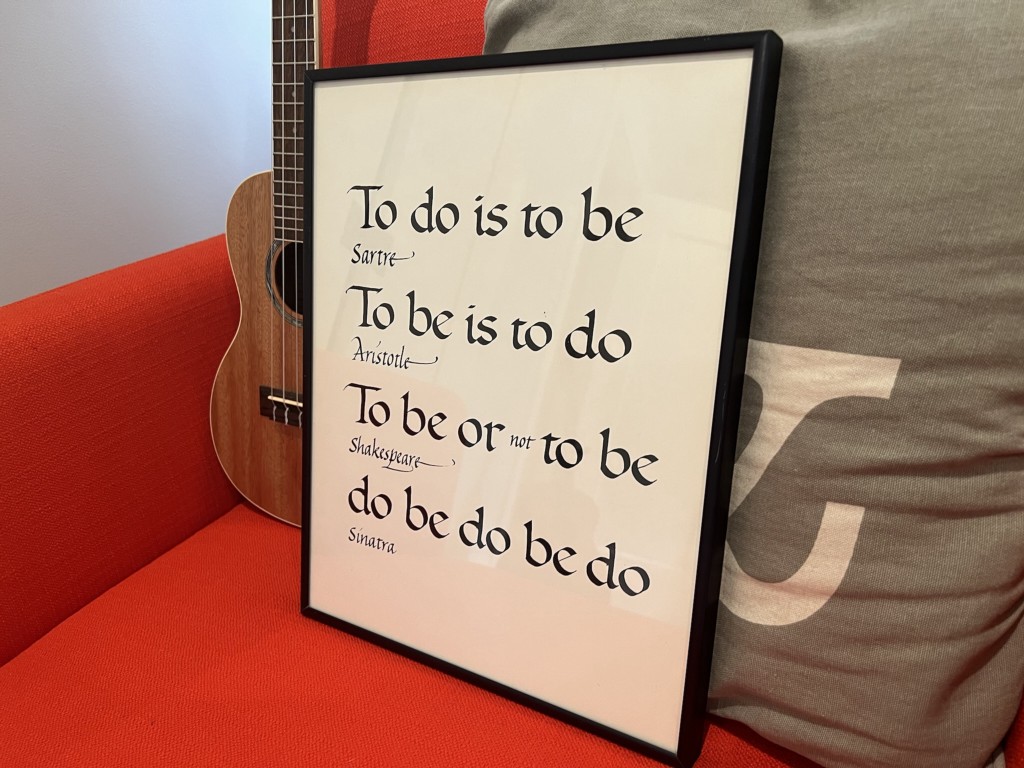

To be or to do?

First, let’s get clear on the difference between doing and being.

Doing involves actions: discrete, tangible, work. In a sense, it is product-focused.

Being involves thoughts and beliefs: everything underneath how we operate.

My clients find doing/actions easier to describe, measure and achieve. Who doesn’t love a good checklist? And while they initially find being/beliefs harder to see and describe, they ultimately feel far more capable of navigating organizational and workflow shifts once they dig into the being.

Fellow coach Alex Carabi describes this dichotomy like an iceberg — often leaders/managers instinctively focus on the doing, the part they can easily see above the water; but those changes are only temporary. The real change, the kind of change that dictates whether an organization will fail or succeed, is far below the water line in the space where the collective beliefs of the organization are held.

Debunking your excuses for not being data driven

I hear a lot of excuses for why organizations struggle with being data driven. Let’s do a quick reality check so we can talk more about why being data driven is about your organizational health.

· “We need better tools.” I know some amazing organizations that use Microsoft Excel or Google Sheets as their platform. They have cultivated a desire to create and use information from them, which is far more powerful than the tool itself.

· “I am not a data person.” We are all data people when the data is meaningful: when most of us prepare for a trip away from home, we check the weather report and pack accordingly. A weather report is a dashboard representing complex data and analysis, and all of us can read one and make strategic decisions with it. This mindset shift is crucial: we are all data people; your organization already “does” data. You have to decide collectively what data will be meaningful to your work and commit to using it as fundamental to your organization’s health.

· “We have too many competing priorities” or “We don’t have time for this.” What self-limiting narrative are you telling yourself to restrict information that would help your organization serve people better? What is getting between you and greatness? What if data were a crucial mineral in the water that nourishes your organization?

· “We have a solid command of our data and who we serve.” Often this is a saboteur or guard rail that keeps out information that would challenge preconceived notions. It can inhibit willingness to innovate and/or mask a reluctance to confront evidence that something you do isn’t working. Your experience of your clients may be supported by the data, but more often you are missing part of the story. And why wouldn’t you want the whole picture?

DOING data-driven relies on actions, BEING data-driven requires culture change

Being and doing are partners. If you want to create a more data-driven culture, you won’t treat them as either/or. Many organizations focus on improving the doing part, such as building logic models and dashboards, but then relegate those tools to a specific person or department who may not have the influence to ensure that the data gets used when critical decisions are made.

BEING data driven requires that the constellation of people recognize that data… and work together to integrate it into the organization’s culture and all its decision-making.

If your organizational system were described the way your health is, you couldn’t define it by any one organ or health metric. Rather, health requires each of those things to work both independently and as part of the greater whole.

And the hardest part: you already knew this

Systems-level work isn’t particularly new or even revolutionary. You know some flavor of it by another framework: team building has been around for ages, and efforts around diversity, equity, inclusion and belonging are deeply rooted in systemic efforts.

When we sit down and piece through where your organization struggles, maybe with an elucidating Five Whys exercise, you might exhale with the knowing sigh of someone who has been trying to fix a symptom of a deeper underlying problem. But that’s also the good news. Once you bring these issues to light, you can do something about them. And get on with the power of BEING data driven.