Data Placemats & Emergent Learning Tables: Tools to Meaningfully Engage Diverse Perspectives in Evaluation Sensemaking

In an earlier post, "Reflections on the Intersection of Evaluation and Emergent Learning," I shared insights generated through applying Emergent Learning principles and tools to my work. Through this experience, I came to realize that the true intersection between evaluation and emergent learning lies in the interpretation of data and its use for reflection and learning. Data interpretation is a key part of the evaluation life cycle. It's the space where we start to make meaning of, and draw conclusions about, the data collected through the evaluation. Often, this data is an accounting of the experiences and observations of community members and other stakeholders who aren't typically involved in the sensemaking conversation.

I believe that involving the stakeholders most connected to the programs, initiatives, and systems we are evaluating in the interpretation process invites a diversity of perspectives that can strengthen insights and lead to new ways of moving forward.

This post focuses on using data placemats within the context of emergent learning as a vehicle to meaningfully involve stakeholders in the sensemaking process. (To learn more about data placemats, check out this article.)

The Data Interpretation Meeting

As part of the multi-site health equity initiative that Innovation Network is evaluating, our evaluation team conducted approximately 60 interviews with participating community members. As we analyzed and reflected on the data, I realized that we were capturing only part of the story…

To truly understand the data and turn it into meaningful action, we would need to involve the people connected to the communities, and the communities themselves, in the sensemaking process.

While I often involve the client and relevant stakeholders in the interpretation of data, I usually do a fair amount of data interpretation in advance and feel pressure to come up with insightful findings and recommendations of my own. For this evaluation, I took a different approach: I scheduled a full-day, in-person data interpretation meeting with foundation staff and members of the technical assistance/coaching team.

The purpose of this meeting was to use the emergent learning table as a means to share evaluation data, create space for participants to add their own observations and experiences, and collaboratively engage in conversation to generate meaningful insights and new ideas for moving the work forward.

Through this conversation, I wanted to gather the experiences, interpretations, and reflections of the individuals who were working closely with the communities.

The Emergent Learning Table

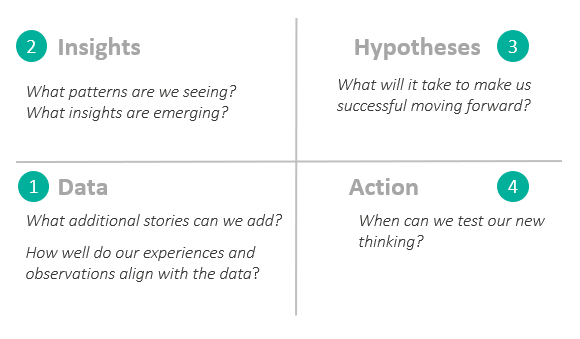

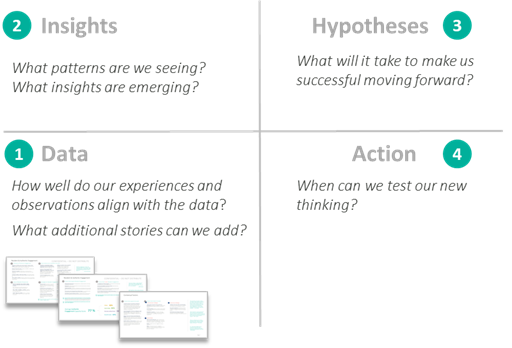

The emergent learning table helps groups articulate their best collective thinking about what it will take to be successful by providing a platform to engage stakeholders in a process designed to 1) reflect on data, 2) generate insights, 3) establish hypotheses, and 4) move towards action. Through this platform, conversation is organized into four quadrants:

- Quadrant 1: Ground Truth/Data (discussion of experiences and stories)

- Quadrant 2: Insights (an opportunity to collaboratively reflect on the data to highlight patterns and generate insights)

- Quadrant 3: Hypotheses (an opportunity to generate new ideas for moving forward)

- Quadrant 4: Moving to Action (making a plan to test new hypotheses)

For more information on emergent learning tables, check out Fourth Quadrant's Guide to Emergent Learning Tables.

While I was initially apprehensive about using a new approach for the interpretation process, I was also excited about the possibilities it could create. Our team had lots of data to share, and we would be engaging with a group of people who were truly involved and invested in the outcomes of this initiative. What better group of people to engage in this conversation!

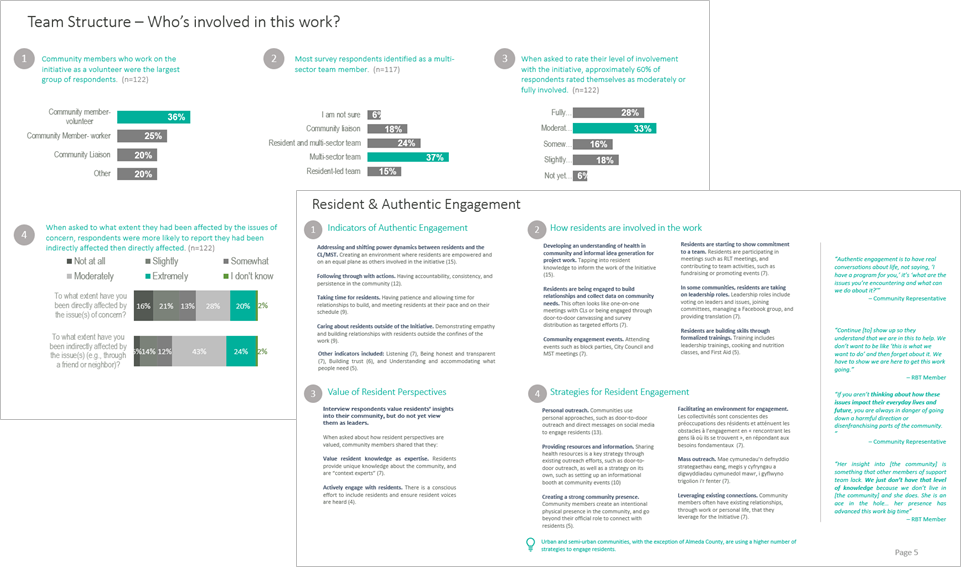

Through interviews and surveys, our evaluation team collected a large amount of data. The interviews in particular yielded some great qualitative data.

How could we share this information in a way that would facilitate the sensemaking process, so that participants could readily comprehend the data and surface insights?

Data Placemats

I often use data placemats as a way to organize the evaluation data into topic areas to help meeting participants understand and make sense of data.

The visual nature of the data placemat makes it easier for participants to digest information, enabling them to more readily engage in productive conversation around the data.

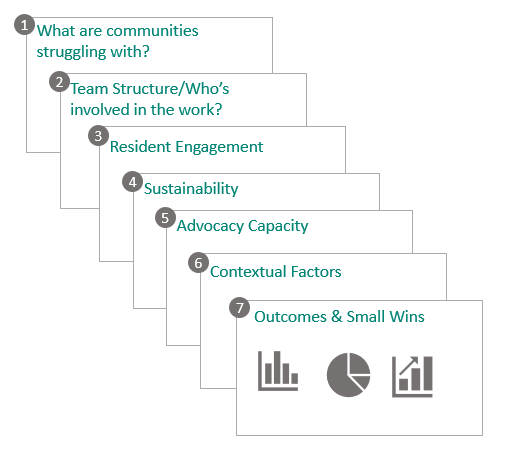

For this meeting, we used a combination of data placemats and an emergent learning table to structure the conversation. We created a total of seven data placemats, each focusing on a specific thematic area that emerged from the evaluation team's preliminary analysis.

Each placemat contained a combination of interview quotes organized around subthemes and supporting charts and graphs. The placemats were used as a vehicle to discuss data during the Quadrant 1 conversation.

Using data placemats to help participants understand and make sense of the data allowed us to facilitate a conversation that flowed from data to insights to hypotheses to action.

What did this make possible?

Moving to Action. Using the emergent learning table as a platform for data interpretation made it possible for the group to go beyond insights to action. This step from insight to action is where evaluation often falls short. The collaborative nature of the conversation and the involvement of multiple stakeholders helped increase buy-in to the hypotheses that were generated. This buy-in helps ensure that the hypotheses are followed through on and tested.

Renewed sense of purpose. Going through the quadrants together gave folks in the room a renewed sense of purpose. While many of the insights generated involved issues associated with structural racism and the devastating impacts of white privilege, the group did not feel deflated. Rather, the group worked together to identify existing barriers and brainstorm new ways of moving forward. Coalescing around a common goal/framing question helped inspire a commitment to reflection and ongoing learning.

Creating space for transformation. All too often, grantees and community members experience evaluation as transactional and one-sided, and they are viewed primarily as subjects of data collection. For evaluation to be truly reflective of the experiences and learnings that are emerging from the ground, we must treat recipients of program services (e.g., grantees and community members) as learning partners, not just as individuals called upon for data collection. Inviting the subjects of data collection to the sensemaking table as experts in their own lived experience, alongside those who bring other forms of knowledge and expertise, creates the possibility for transformation through collaborative conversation grounded in data and experience.

What did we learn?

Power of collaborative conversation. Inviting a diverse group to the table helped leverage the knowledge and experience of each person and added depth to the conversation. Each person who participated in the data interpretation meeting had their own experiences, observations, and insights to contribute. This was especially pronounced when different technical assistance providers reflected on their experiences working with their designated communities. By having multiple perspectives at the table, we could learn from each other's experience and work together to identify emerging patterns in the data and the stories that were being shared.

Engaging a diversity of voices. The emergent learning table discussion involved foundation staff, community coaches, and technical assistance providers. The diversity of perspectives, reflected in the stories and experiences shared by those in the room, led to a more meaningful conversation and helped generate new hypotheses grounded in experience.

Moving forward, I would like to broaden the table by inviting community members into the sensemaking conversation to gather their perspectives on this work. By engaging a diversity of perspectives in these conversations, we will be able to leverage the experiences and knowledge of individuals across a broader cross-section of the community and work towards developing a more holistic understanding of what it takes to advance health equity in communities.

The emergent learning table demonstrated the power of incorporating real-time, collaborative reflection in the evaluation sensemaking process. Data placemats offered a way to share the data collected through the evaluation, helping participants digest large amounts of information so they could engage meaningfully in collaborative sensemaking and idea generation.

Data Placemats & Emergent Learning Tables was originally published in InnovationNetwork on Medium.