In our previous article, Interpreting themes from qualitative data: thematic analysis, we introduced the concept of thematic analysis, provided you with a 5-step process to complete your analysis, and highlighted some common challenges with thematic analysis.

In this follow-up article, we explore how to present your themes effectively. Whether you’re developing a comprehensive final report, a concise summary report, an eye-catching infographic, or a presentation, how you present your themes can significantly affect the clarity and impact of your findings.

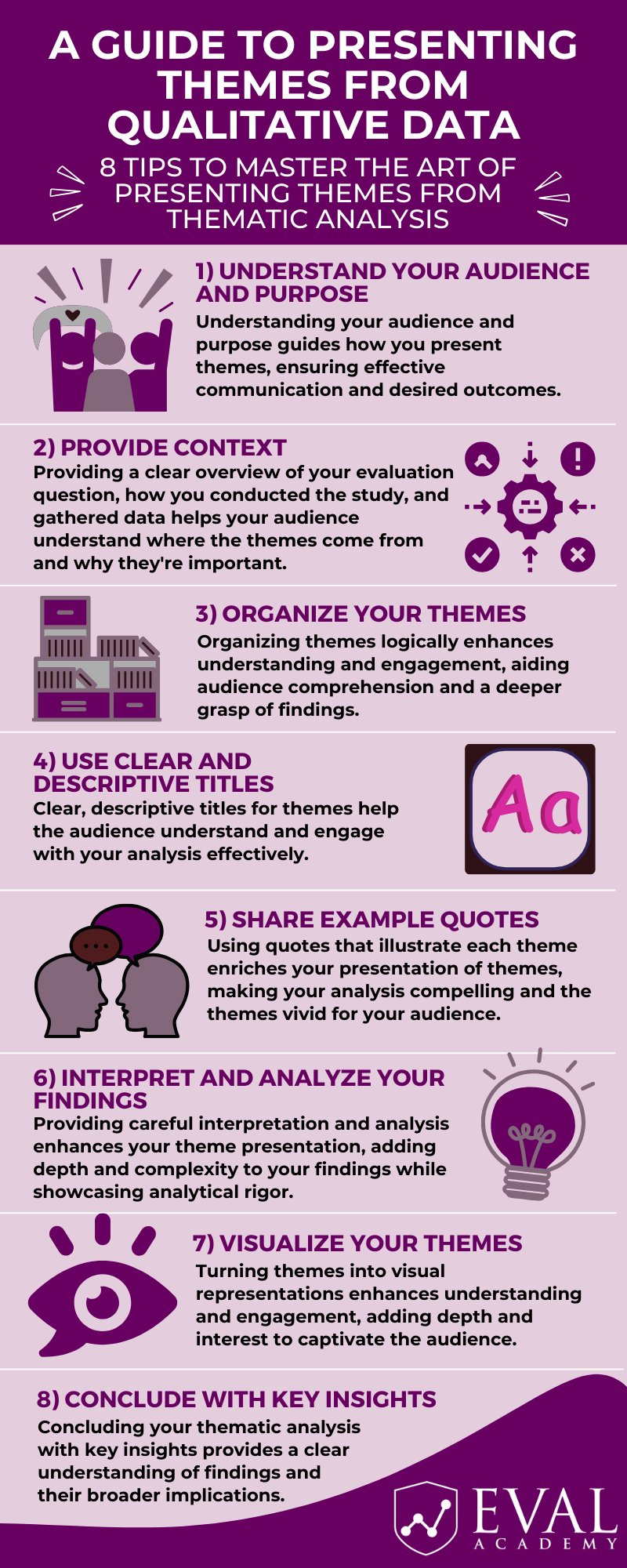

Here are our 8 top tips to master the art of presenting themes from thematic analysis. Don’t forget to download our free infographic here!

Step 1: Understand Your Audience and Purpose

The first and arguably most important step in presenting your themes is understanding your audience and purpose. Before delving into the specifics of presenting your themes, it’s essential to consider who will be consuming this information and why.

Consider Your Audience:

- Familiarity: Assess the understanding and experience of your audience with evaluation and qualitative analysis techniques. Do they possess a strong understanding of these concepts, or do they have differing levels of experience?

- Audience Engagement and Relevance: Consider the diverse interests, engagement levels, and specific needs of your audience. You will need to tailor your presentation of themes and content to both captivate and resonate with their interests while ensuring that your analysis directly addresses their concerns and contributes meaningfully to their decision-making processes.

Clarify the Purpose:

- Desired Outcome: Determine what you aim to achieve with your thematic analysis. Are you seeking to inform, persuade, inspire action, or spark discussion?

- Fulfilling Evaluation Questions and Objectives: Align your analysis with the broader objectives of your evaluation. How do your themes contribute to answering your evaluation questions or addressing your evaluation aims?

By taking the time to understand your audience and purpose, you can tailor your presentation of themes to effectively communicate your findings and achieve your desired outcomes. This foundational step sets the stage for the rest of your theme summary, guiding subsequent decisions regarding content, format, and delivery methods.

Now it’s time to move forward with writing and presenting the themes!

Step 2: Provide Context

Providing context is essential for ensuring that your audience fully comprehends the significance and relevance of the themes you present.

Provide an Overview of the Evaluation Questions:

- Clearly State Your Evaluation Questions: Articulate your evaluation questions or objectives. This establishes the foundation for your thematic analysis and provides clarity to your audience regarding the purpose of your evaluation.

- Highlight the Significance: Explain the importance of your evaluation question and the broader implications of addressing it. This contextualizes your themes within the project or program being evaluated, aiding your audience in understanding the relevance and significance of your findings.

Provide an Overview of the Methodology:

- Outline Your Methodological Approach: Describe the methodology employed for conducting the thematic analysis, including the qualitative data collection methods utilized, such as interviews, focus groups, or document analysis.

- Explain Your Analytical Process: Provide an overview of the steps undertaken to analyze the data and derive themes. Highlight any specific analytical techniques, whether inductive or deductive, and mention any software utilized for analysis.

- Address Rigor and Trustworthiness: Discuss the measures taken to ensure the rigor and trustworthiness of the thematic analysis, such as inter-coder reliability checks, member checking, or reflexivity.

- Discuss Data Saturation: If applicable, mention whether data saturation was achieved and its impact on the thematic analysis process.
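If two people coded the same excerpts, one common inter-coder reliability check is Cohen's kappa, which compares the agreement you observed with the agreement you would expect by chance. The following is a minimal Python sketch, assuming each coder assigned exactly one code per excerpt. The code labels and the `cohens_kappa` helper are hypothetical and are not tied to any particular analysis software.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one code per excerpt."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of excerpts.")
    n = len(coder_a)
    # Share of excerpts where both coders chose the same code.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from how often each coder used each code.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:
        # Both coders used one identical code throughout; agreement is total.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned to the same six excerpts by two coders.
coder_a = ["access", "cost", "access", "staffing", "cost", "access"]
coder_b = ["access", "cost", "staffing", "staffing", "cost", "access"]
kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 2))
```

On this made-up data the coders agree on five of six excerpts, which works out to a kappa of 0.75. What counts as acceptable agreement varies by field, so it helps to report the value alongside a note on how disagreements were resolved.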

Provide Supplementary Materials:

- Include Appendices: Consider including supplementary materials, such as a copy of your codebook or a list of related themes, in an appendix of your report. This allows interested readers to delve deeper into the analytical details of your thematic analysis and enhances transparency and accessibility.

Offering a thorough overview of your evaluation question, methodology, and data collection process establishes a strong foundation for presenting your themes. This context helps your audience understand where the identified themes came from and why they matter, which can enhance their understanding of your evaluation findings.

Step 3: Organize Your Themes

Themes within a thematic analysis are often presented in a narrative format. Organizing these themes is crucial to ensure a smooth flow in your narrative, making it easy for your audience to follow. This step is important in guiding your audience through the logical progression of your analysis, resulting in a coherent and understandable presentation of your findings.

Group Related Themes:

- Identify Common Threads: Review your themes to identify any commonalities or relationships. Group themes that share similar characteristics or are conceptually related.

- Create Theme Clusters: Consider clustering related themes together to form overarching categories or sub-themes. This structured approach provides clarity and coherence to your presentation.

Establish a Logical Flow:

- Introduction and Overview: Begin by introducing the overarching themes and providing an overview of what will be covered. This sets the stage for a deeper exploration of each theme.

I see three main ways to structure your themes and analysis:

1) Alignment with Evaluation Questions: This method focuses on organizing themes according to the specific evaluation questions they address, ensuring direct alignment with the objectives of the evaluation. It provides a targeted approach to presenting themes that directly respond to the research questions or outcomes.

2) Sequential Presentation: This method involves organizing themes in a sequence that mirrors the progression of the evaluation or analytical journey. It presents themes in the order they were identified or developed during the analysis, providing a logical flow that reflects the process of exploration and discovery. Sequential presentation can also take the weight of the themes into account, so that the most prominent themes are discussed first.

3) Chronological Order: This method entails presenting themes in chronological order to reflect the sequence of events captured in the data. It highlights temporal patterns or changes over time, offering insights into the evolution of themes within the context of the evaluated phenomenon.

When structuring themes and analysis for an evaluation, it’s essential to consider factors such as the nature of the data, the evaluation objectives, and audience preferences. For advisory groups or those deeply involved in the evaluation, a detailed approach aligned with the evaluation questions may be the most appropriate. Decision-makers, by contrast, may favour upfront, concise insights outlined through sequential presentation. Operational teams may benefit from practical, actionable recommendations tailored to their daily tasks, presented in chronological order. Aligning the presentation format with audience needs maximizes clarity and relevance, enhancing the impact of evaluation findings.

- Transition between Themes: Make sure your themes flow smoothly by using clear signals or phrases to transition between them. This keeps your analysis easy to follow and helps your audience stay engaged.

Logically organizing your themes helps people understand and stay interested. When your presentation is well-organized, it’s easier for your audience to absorb your analysis and get a deeper grasp of your findings.
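If you want to order themes by prominence, one simple starting point is to count how many distinct participants raised each theme. Here is a short Python sketch of that idea. The participant IDs, theme names, and the `themes_by_prominence` helper are all hypothetical, and the count is only a rough proxy: a theme raised by few people can still carry a lot of weight.

```python
# Hypothetical coded excerpts as (participant_id, theme) pairs.
coded_excerpts = [
    ("P1", "Scheduling barriers"),
    ("P2", "Scheduling barriers"),
    ("P2", "Peer support"),
    ("P3", "Scheduling barriers"),
    ("P3", "Peer support"),
    ("P4", "Staff turnover"),
]


def themes_by_prominence(excerpts):
    """Return (theme, participant_count) pairs, most widely raised theme first."""
    participants = {}
    for participant_id, theme in excerpts:
        # A set ensures each participant is counted once per theme.
        participants.setdefault(theme, set()).add(participant_id)
    counts = [(theme, len(ids)) for theme, ids in participants.items()]
    # Sort by count (descending), then alphabetically to break ties.
    return sorted(counts, key=lambda item: (-item[1], item[0]))


ordered = themes_by_prominence(coded_excerpts)
for theme, count in ordered:
    print(f"{theme}: raised by {count} participant(s)")
```

Treat the resulting order as a draft. You may still move a theme up or down depending on how strongly it relates to your evaluation questions.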

Step 4: Use Clear and Descriptive Titles

Crafting clear and descriptive titles for your themes is essential for effectively communicating the focus and essence of each theme to your audience.

Craft Effective Theme Titles:

- Capture the Main Idea: Ensure that each theme title succinctly captures the main idea or concept it represents. Aim to condense the essence of the theme into a few words or a brief phrase. Clear and descriptive titles serve as signposts that orient your audience and provide insight into the content that follows.

- Grab their Attention: Create captivating titles that grab your audience’s attention and make them curious. Well-chosen titles can draw people in and encourage them to learn more about the themes discussed in your presentation.

- Avoid Ambiguity and Complexity: Choose words and phrases that are clear, straightforward, and easily understood by your audience. Avoid jargon, technical terminology, or unclear language that may obscure the meaning of the theme. Keep it simple to make sure your message is clear.

By using clear and descriptive titles for your themes, you make your analysis easier to understand and more impactful. These titles act as a roadmap, helping your audience navigate through your thematic analysis and ensuring that your main points are communicated clearly. For example, a title such as “Understanding Engagement Levels: In-person programming supports higher engagement compared to online” tells the reader both the topic of the theme and its main finding.

Step 5: Share Example Quotes

Using real quotes from your qualitative data makes your themes more vivid and enhances how you present your findings. As we discussed in Interpreting themes from qualitative data: thematic analysis, you can set up a special “example quote” feature in your data analysis software to quickly highlight quotes that represent each theme, enabling easy retrieval while you write up your narrative. This can help to save time, streamline the quote selection process, and eliminate the need to re-read data excerpts later on.

Bring Themes to Life:

- Select Meaningful Quotes: Pick quotes that clearly show what each theme is about. Choose quotes that capture the main ideas, emotions, or experiences related to the theme. These quotes should connect with your audience and help explain your analysis clearly.

Represent Diversity:

- Ensure Diverse Perspectives: Ensure that the quotes you select represent a diverse range of perspectives, voices, and experiences within each theme. This inclusivity adds richness and depth to your narrative.

- Use a Variety of Sources: Draw quotes from various sources within your data, such as different interviewees, focus group participants, or document excerpts. This variety showcases the breadth of your analysis and reinforces the credibility of your findings.

- Ensure Ethical Processes: Ensure that any ethical processes, such as removing identifiable features from quotes, have been followed. In projects with small populations, consider removing any unique turns of phrase or colloquialisms that could potentially identify the speaker.

Using quotes that illustrate each theme enriches your presentation of qualitative findings and helps your audience better understand your analysis. These carefully chosen quotes act as strong evidence, bringing your themes to life, and making your analysis more compelling.
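If your coded excerpts can be exported from your analysis software, the “example quote” flag and the variety-of-sources tip can be combined in a few lines. The Python sketch below assumes a hypothetical export where each excerpt records a participant, a theme, an example-quote flag, and the text. The field names, the `pick_quotes` helper, and the placeholder quote text are all illustrative.

```python
# Hypothetical export of coded excerpts; quote text is placeholder only.
excerpts = [
    {"participant": "P1", "theme": "Peer support", "example_quote": True, "text": "[quote 1]"},
    {"participant": "P1", "theme": "Peer support", "example_quote": True, "text": "[quote 2]"},
    {"participant": "P2", "theme": "Peer support", "example_quote": False, "text": "[quote 3]"},
    {"participant": "P3", "theme": "Peer support", "example_quote": True, "text": "[quote 4]"},
    {"participant": "P4", "theme": "Staff turnover", "example_quote": True, "text": "[quote 5]"},
]


def pick_quotes(items, theme, max_quotes=3):
    """Pick flagged example quotes for a theme, at most one per participant."""
    chosen = []
    seen_participants = set()
    for item in items:
        if item["theme"] != theme or not item["example_quote"]:
            continue  # Wrong theme, or not flagged as an example quote.
        if item["participant"] in seen_participants:
            continue  # Already quoting this participant for this theme.
        seen_participants.add(item["participant"])
        chosen.append(item["text"])
        if len(chosen) == max_quotes:
            break
    return chosen


selected = pick_quotes(excerpts, "Peer support")
print(selected)
```

A filter like this only narrows the pool. You still need to read the shortlisted quotes for meaning and check them against your de-identification process before they go into a report.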

Step 6: Interpret and Analyze Your Findings

Providing a thorough interpretation and analysis for each theme is crucial for enhancing the depth and impact of your thematic analysis.

Explain the Significance of the Theme:

- Highlight Relevance: Emphasize how the theme contributes to the overall narrative of the findings. Discuss how each theme sheds light on key aspects of the program or intervention under investigation.

Explore Implications:

- Discuss Practical Applications: Explain how insights from the theme can inform program directions or decision-making processes.

Demonstrate Analytical Rigor:

- Demonstrate Depth of Analysis: Demonstrate the depth of your analytical engagement with the theme by going beyond surface-level description. Provide nuanced insights and interpretations that reflect your deep understanding of the data.

- Reflect Critically: Critically reflect on the themes you’ve presented, recognizing any limitations or biases in your analysis. Discuss different ways to interpret the data or conflicting viewpoints, showing your dedication to thorough analysis and self-awareness.

Tell a Coherent Story:

- Ensure Your Story Flows Smoothly: Make sure that your interpretation and analysis fit together smoothly to tell a clear story with your data. Blend themes, interpretations, and evidence seamlessly to create a persuasive and engaging narrative.

Connect to Existing Literature:

- Integrate Existing Literature: If applicable, situate the theme within the existing body of literature on the topic.

- Align with Relevant Theoretical Frameworks: If applicable, consider how the theme aligns with theoretical frameworks or conceptual models underpinning your evaluation.

By offering thoughtful interpretation and analysis, you enrich the presentation of your themes, adding depth and complexity to your findings and demonstrating analytical rigor.

Step 7: Visualize Your Themes

Visualizing your themes through charts, graphs, or thematic maps can significantly enhance the clarity and impact of your presentation of themes. Visualizations provide an alternative mode of understanding that complements textual descriptions, and incorporating visual elements can increase engagement and retention of information. For ideas on turning qualitative data into numbers you can chart, take a look at our article 3 Easy Ways to Quantify Your Qualitative Data.

Choose Appropriate Visual Formats:

- Select Visual Aids: Choose visual formats that are suitable for representing the nature of your qualitative data and the relationships between themes. Options include bar charts, line graphs, pie charts, scatterplots, or thematic maps (see our previous article for an example).

- Match Complexity: Match the complexity of your data and analysis with the appropriate level of detail in your visualizations. Simple visualizations may suffice for straightforward themes, while more complex themes may require more elaborate representations.

Convey Complex Relationships:

- Highlight Patterns: Use visualizations to highlight patterns, trends, or relationships between themes that may not be immediately apparent from textual descriptions alone. Visual representations can help reveal underlying structures or dynamics within the data.

- Compare and Contrast: Employ side-by-side comparisons or juxtapositions of themes to facilitate comparisons and contrasts. This allows your audience to discern similarities, differences, or variations across themes more easily.

Customize Visualizations:

- Tailor to Audience Needs: Customize your visualizations to match your audience’s preferences and needs, considering factors like their familiarity with visual data and cultural backgrounds.

Integrate with Narrative:

- Visual Integration for Impact: Incorporate visualizations seamlessly into the narrative to support and strengthen key points and themes. Ensure they enhance your narrative rather than detract from it.

- Narrative Alignment: Ensure that the visualizations you choose align with the narrative structure and contribute meaningfully to the overall coherence and flow of your argument.

- Add Contextual Information: Include explanations, labels, and notes with your visualizations to help your audience understand the meaning and importance of the theme.

Based on my experience, infographics are generally enhanced by visuals like icons, images, and pie charts, while detailed visuals such as scatterplots and thematic maps are better suited for reports. By turning your themes into visual representations, you make ideas easier to grasp and more engaging. Thoughtfully selected visuals add depth and interest to your themes, making them more dynamic and captivating for your audience.
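Before you build a polished chart, it can help to check what a simple bar chart of theme frequencies would look like. The following Python sketch counts how often each theme was coded and prints a labelled text bar chart. The theme labels and the `bar_chart_rows` helper are hypothetical, and in practice you would likely pass the same counts to whatever charting tool you already use.

```python
from collections import Counter

# Hypothetical list with one theme label per coded excerpt.
theme_labels = [
    "In-person engagement", "Online fatigue", "In-person engagement",
    "Scheduling", "In-person engagement", "Online fatigue",
    "In-person engagement",
]


def bar_chart_rows(labels, width=20):
    """Build one text row per theme, with bars scaled to the most frequent theme."""
    counts = Counter(labels)
    if not counts:
        return []
    top = max(counts.values())
    rows = []
    # most_common() lists themes from most to least frequently coded.
    for theme, n in counts.most_common():
        bar = "#" * round(n / top * width)
        # Each row carries its own label and count so the chart is self-explanatory.
        rows.append(f"{theme:<22} {bar} {n}")
    return rows


rows = bar_chart_rows(theme_labels)
for row in rows:
    print(row)
```

Keeping the theme name and count on every row follows the tip above about adding contextual information, so a reader does not have to guess what each bar represents.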

Step 8: Conclude with Key Insights

Wrapping up your presentation of themes with a synthesis of key insights is important for solidifying understanding and emphasizing the significance of your findings.

- Highlight Main Findings: Summarize the main themes and findings that emerged from your thematic analysis. Provide a concise overview of the key insights gleaned from your data and their relevance in addressing your evaluation questions and objectives.

- Explore Practical Applications: Consider practical applications of your findings and how they can inform decision-making, policy development, or future programming.

- Identify Potential Areas for Future Evaluation: Discuss unresolved issues, areas needing further investigation, or opportunities for methodological improvement based on the insights from your thematic analysis.

Summarizing key insights from your thematic analysis wraps up your narrative with a clear understanding of your findings and their broader impact.

Conclusion:

Presenting themes from thematic analysis is not just about summarizing your findings but also about effectively communicating the richness and complexity of your data. By understanding your audience, organizing your themes thoughtfully, and providing context, interpretation, and visualizations, you can deliver a compelling presentation that showcases the depth of your research insights.