One of the roles of the evaluation function is to develop evaluative thinking at every possible level.

I.) Evaluative thinking (ET)

(1) is a disciplined approach to inquiry and reflective practice that helps us make sound, evidence-based judgments as a matter of habit,

(2) applies not only to evaluation (or evaluation units) but across the whole organization and all phases of management,

(3) has a capacity-development dimension at the individual, organizational, and structural levels.

Thomas Archibald and his team have defined "evaluative thinking" as follows:

(1) it is critical thinking applied in the context of evaluation,

(2) motivated by (a) an attitude of inquisitiveness and (b) a belief in the value of evidence, that

(3) involves (a) identifying assumptions, (b) posing thoughtful questions, and (c) pursuing deeper understanding

(4) through (a) reflection and (b) perspective taking, and (c) informing decisions in preparation for action.

II.) An evaluative thinking (ET) approach aims to change attitude (motivation, ownership, and understanding), aptitude (capacities, means, and skills), and incentives (opportunities) with respect to (1) evaluative thinking in general and (2) the usefulness of evaluation to the organization, as a tool that could be integrated into the work of every member of the organization.

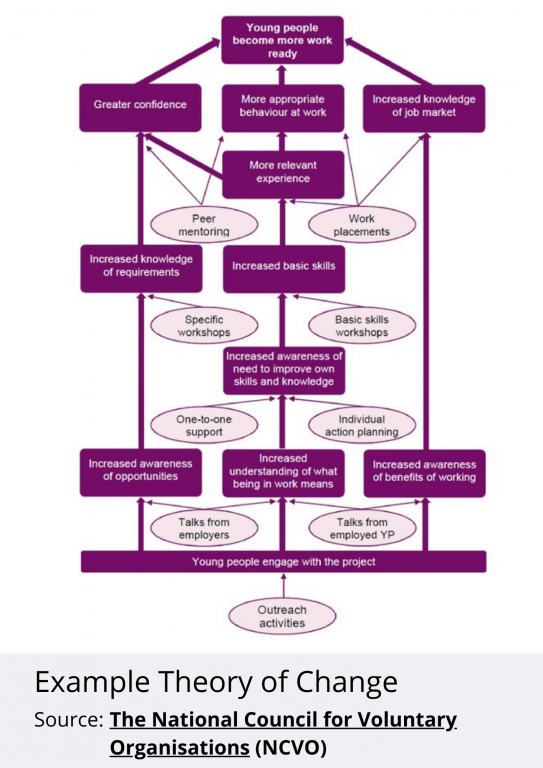

Some ways in which evaluative thinking relates to transforming evaluation in order to evaluate transformation: (1) decentralized leadership, (2) knowledge management, (3) systems thinking, (4) collective construction.

- It democratizes and decentralizes evaluative inquiry.

- It draws on practical wisdom and a plurality of ways of knowing and reasoning.

- It is systems and equity thinking.

- It balances intuition and rationality.

III.) How can continuous evaluative thinking be ensured in organizations? Because ET is a discipline, it requires a dedicated approach to strengthening capacities across the whole organization (not only in evaluation units) in the disciplines it requires:

1. Strengthening leadership and motivation for ET: ensure effective leadership (centralized and decentralized) and develop a learning culture (learning that is legitimized and incentivized).

2. Strengthening capacities and means for ET:

2.1. Capacity development that provides opportunities to build:

- Ways of "knowing, understanding, framing, and prioritizing" the organization's needs for information, knowledge, and learning, and the questions to be answered (evaluation questions),

- Ways of seeking answers and of using existing evidence,

- Strengthening the quality of the process, of the questions, and of existing evidence,

- Creating spaces and time for ET: (1) (in)formal, (2) individual/collective.

2.2. Some drivers could be: (1) ET as an organizational objective, (2) explicitly integrating ET into the planning, monitoring, and evaluation cycle, (3) including demand for ET as a requirement in hiring processes, Terms of Reference, and performance measurement, (4) including the strengthening of ET as an objective of evaluations.

3. Strengthening incentives: (1) create individual and organizational accountability frameworks for ET, (2) foster relationships of trust, transparency, and sharing, (3) invest in infrastructure for knowledge use and management.