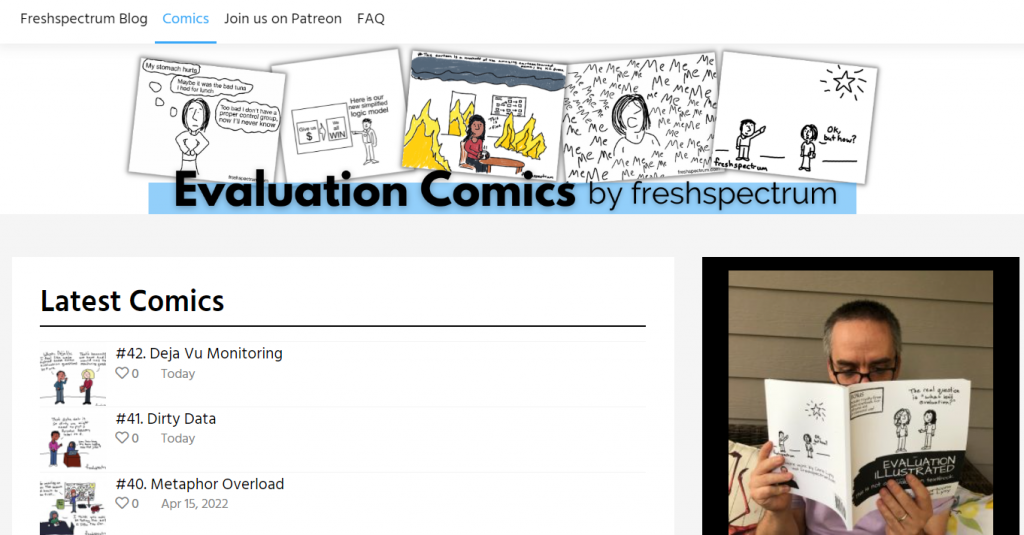

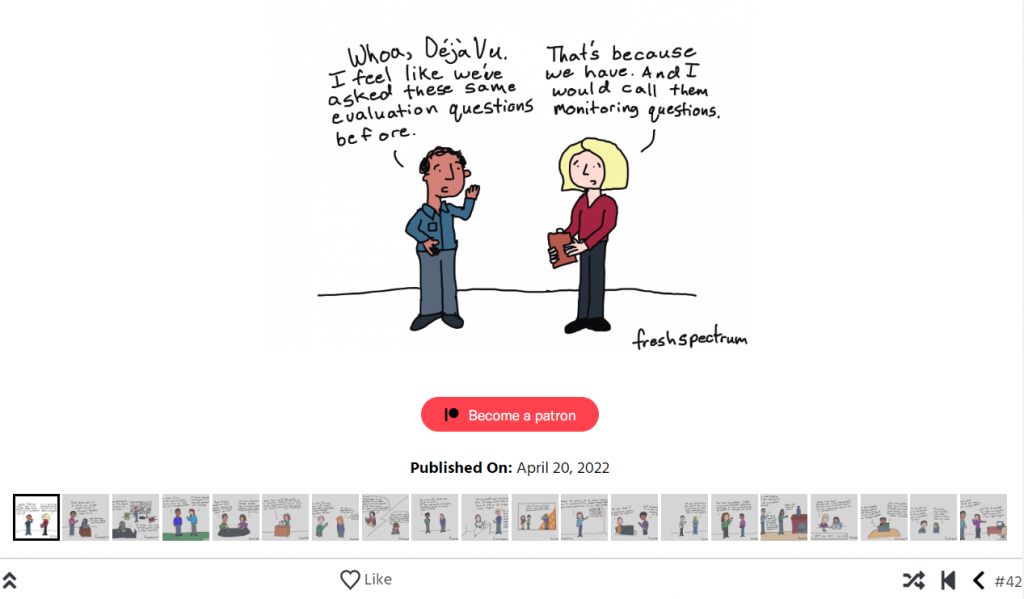

Big news! I have an evaluation comics page now.

So what does that actually mean?

For the last decade, my comics have lived almost entirely within blog posts.

For most of my cartooning life, I’ve treated cartooning as a form of blog post illustration. My cartoons illustrated ideas found within my blog posts.

For years, just about every blog post would be published with a handful of cartoons. Those cartoons would then spread through social media.

And that used to work just fine.

Why the change?

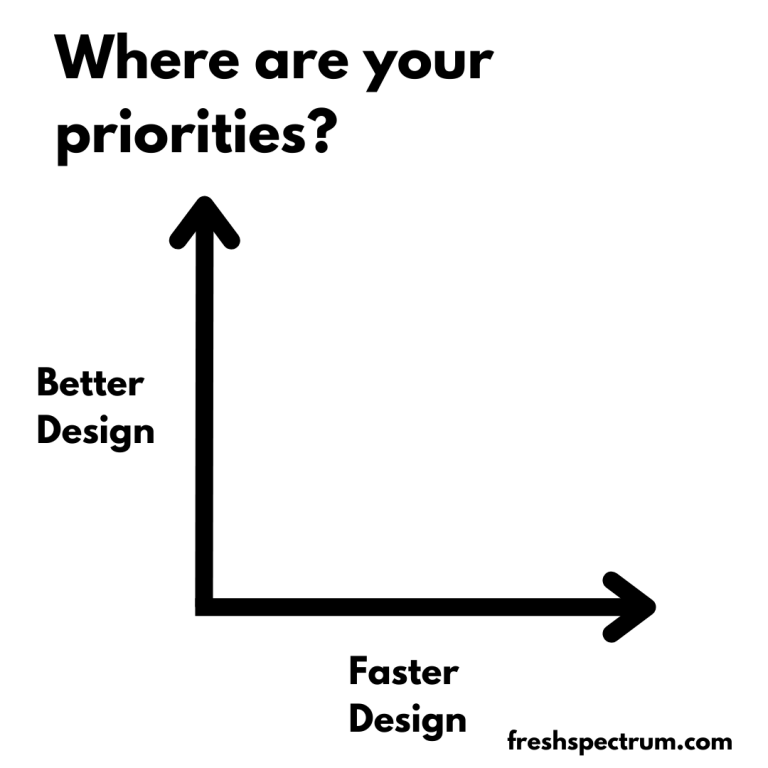

Because lately my professional life (and this blog) has focused more and more on information design. And my plans for the future involve lots of templates and tutorials.

Templates and tutorials are harder to illustrate with comics. And honestly, only cartooning what I blog limits what I cartoon.

So I’ve decided to give my cartooning a bit of space.

Will all the cartoons show up on the comics page?

Short answer: no.

I plan to post my cartoons first for my Patrons (you can always join us on Patreon). Some cartoons will stay as Patreon exclusives, but most will go to the comics page.

As for the archives, right now I just have 2022 in there. I plan to back-publish my archives. But since I’ve drawn hundreds of cartoons, it may take a little while.

Until then, the best way to see all of my cartoons is by becoming a Patron, which gets you access to my private Dropbox folder.

Will this mean more cartoons?

Yes.

I’ve changed my process, and it’s re-opened the cartoon floodgates. So be prepared for lots more cartoons in the future (even if you choose not to join us in the Patreon community and just stay a public fan).

How do I get there directly?

You can click the menu link on the freshspectrum homepage.

Or just type evaluationcomics.com into your browser.

Hope you enjoy!