I’ve had many experiences collaborating with other evaluators, colleagues, or program managers starting a new evaluation. Questions fly like “Which evaluation framework will we use?”, “What will our approach be?”, “What is the evaluation plan?”. And often, down in the weeds, we question things like “What are the goals of this project?”, “Is the project achieving objectives?”, “Are there project aims?”.

That’s a lot of jargon! What do all these terms mean?

My personal solution to this problem is to be very clear upfront: I am terminology agnostic. I hold no allegiance to any specific term.

Some people will argue to the death that a goal is a broader concept than an aim, but I guarantee you will meet someone who believes just as strongly that an aim is overarching, with goals nested underneath.

You will meet people who believe adamantly that an evaluation framework is a validated and tested methodology that guides your approach to a specific evaluation. But you’ll meet others who call their own evaluation plans a framework. Likely in reading this you’ve already identified which side of these debates you fall on.

For me, no one is wrong. I chose long ago not to hold etched-in-stone definitions, but to adapt to the language of the clients and other evaluators I work with.

Being agnostic does not mean these definitions don’t matter. They do! It just means I take time to ensure I am on the same page as everyone else in the room so that when we say “goal” we all know what that means and when we say “plan” we all know what that is (and what document to open!).

Being agnostic also doesn’t mean I don’t have my own preferences, and if given the opportunity I will certainly use the language that makes the most sense to me.

Here are my back-pocket definitions for some of this confusing evaluation language.

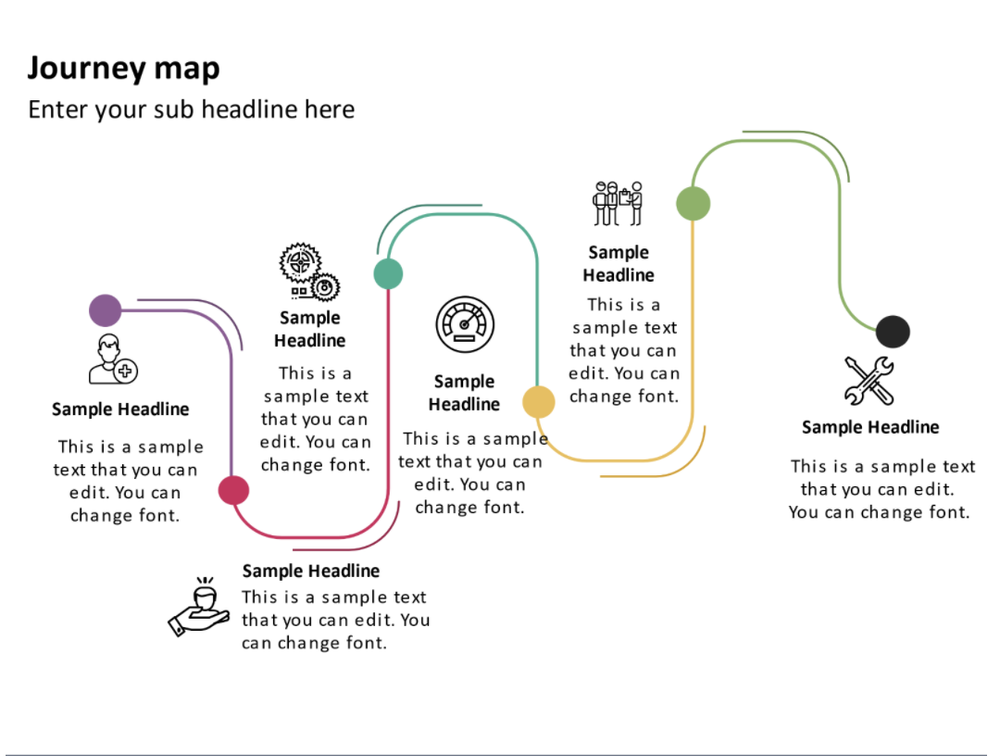

Framework. Plan. Approach. Model.

- Evaluation Framework
  - This is usually a published methodology, such as RE-AIM, Kirkpatrick, or PRECEDE-PROCEED.
- Evaluation Plan
  - This is the document I create to plan out the evaluation for a given project or program. Check out our template for what goes into creating the plan.
- Evaluation Approach
  - This is more about guiding principles. For me, I’ll describe approaches as “being utilization-focused”, or “participatory”. You might include things like “summative” or “formative”.
- Model
  - For me, most of the language I use is covered by framework, plan, and approach, so I don’t tend to use model, but I would align it most closely with Framework. In fact, PRECEDE-PROCEED is actually called a “model”.

So, when a program manager asks you to “create an evaluation framework”, the first step is confirming whether they actually want you to create and document a plan that may use a specific evaluative framework.

When asked which model you may apply to evaluation, you may want to describe both an evaluation approach (e.g., developmental, utilization-focused) and which framework (if any) will be used and confirm that this answers the question.

The key is ensuring everyone is on the same page. Confirmation without assumption is critical.

Goal. Aim. Objective. Intended impact. Outcome. Target. Benchmark.

A second grey area, and perhaps an even more common one, is differentiating goals from aims from objectives, etc. For me, these terms are much more interchangeable than the Framework/Plan discussion. Again, the key here is being clear about what terms you use, what they mean and, if relevant, how they link together.

You may agree that there is some grey area here, but argue that not all the terms I listed are synonymous. I agree: I do think that targets and benchmarks stand a little apart.

Targets and benchmarks have quantitative metrics associated with them: e.g., “We aim to serve 700 clients in this fiscal year.” These are still valid to evaluate, but perhaps not the same as an outcome statement: e.g., “Participants will have increased confidence in accessing support services.”

You could also likely group some of these terms: goal/aim/objective and impact/outcome, for example. The common thread is that in some way each of these terms describes what the program does.

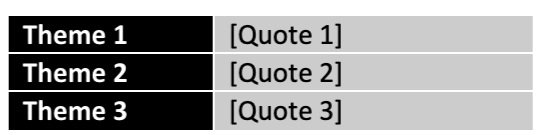

When I start evaluation planning, I’ll look for all of these terms hiding or disguised in:

- Previous evaluation reports and recommendations
- Strategic plans
- Operational plans
- Mission and vision statements
- Core values
- Guiding principles
- Funder requirements/mandates

I try to align my language to what the program stakeholders use. My goal is to determine what the program is trying to achieve so that we can ask key evaluation questions that drive toward those goals (or outcomes, or objectives, or…).

What are your preferred terms and what do they mean to you?

At Eval Academy we’re working hard on an evaluation dictionary to help add some clarity to confusing evaluation jargon.

What terms have we missed? Comment on this article and let me know!
