Most evaluators are hired to evaluate something: a program, grant-funded activities, a new approach. But what do you do if you get asked to build capacity in evaluation? That is, to help another team or organization do their own evaluation?

There are a few courses available (hint: check out our Program Evaluation for Program Managers course!), but sometimes an organization wants an evaluation consultant's help to design a process and tools that it can sustain in-house. That was exactly the ask on one of my recent contracts.

The Ask:

Help us evaluate our impact in a way that we can monitor, review and sustain on our own!

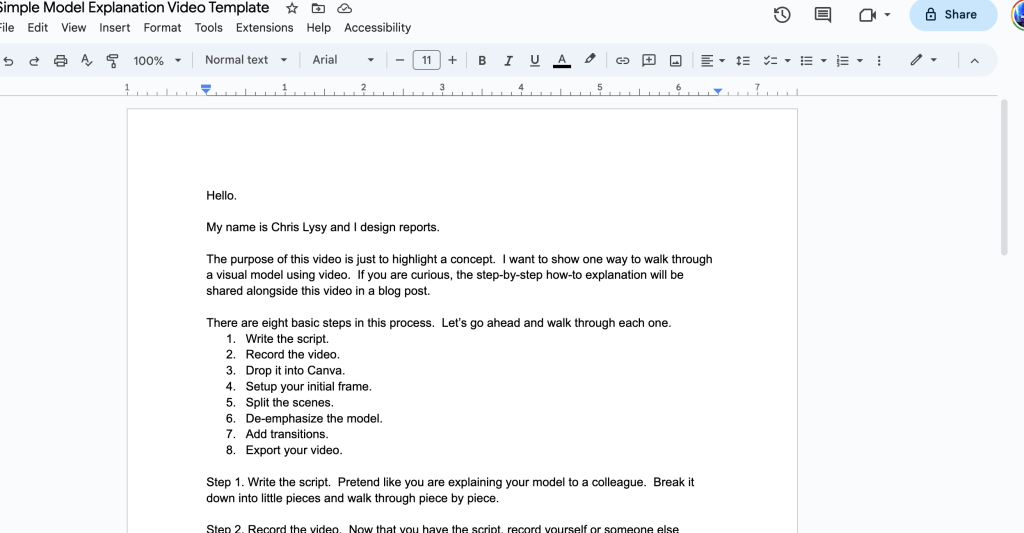

The start of this work wasn't all that different from evaluating a program. I set out to answer two questions: "What do you want to know, and why?" and "How will you use it?" I held the same kick-off meeting I normally do (check out: The Art of Writing Evaluation Questions; Evaluation Kick-Off Meeting Agenda (Template); How to Kick Off Your Evaluation Kick-Off Meeting). I figured that, at the end of the day, this was still about designing a program evaluation. The key difference was that instead of our team implementing the evaluation, we had to train their staff to do it: the data collection, the analysis and the resulting action.

Once we had outlined key evaluation questions that captured what they wanted to monitor, we started working on a toolkit. We envisioned the toolkit as a one-stop shop for everything related to this evaluation: a place any member of the organization could turn to in order to understand the process and learn how to carry it out. At the start of this journey, our proposed table of contents was quite vague and high-level, with sections like "What is the process?" and "Where do I find the data collection tools?". But the more we field tested (more on this later), the more we built out the toolkit.

I learned a lot from this process. Here are some of my top takeaways:

Consent. As evaluators, we can't take for granted that others know about the informed consent process. Most staff at an organization probably don't collect personal information and experiences as part of their work, and likely haven't thought about informed consent in a meaningful way. So a big part of our toolkit focused on consent: what it is, why it's important and how to obtain it. We even shared some Eval Academy content: "Consent Part 1: What is Informed Consent" and "Consent Part 2: Do I need to get consent? How do I do that?".

Confidentiality and anonymity. Part of consent covers whether the information obtained will be kept confidential or anonymous. This raised another key learning: most staff don't think about what this actually means or how it's done. Staff often assume (correctly or incorrectly) that their organization has policies in place, and that they wouldn't be allowed to do something if it were unethical. This isn't always true. In the toolkit we included key information on what confidentiality and anonymity mean and how they apply in the organization's specific context. For more on this, check out our article Your information will be kept confidential: Confidentiality and Anonymity in Evaluation!

Interviewing skills. For their evaluation, the organization wanted to use volunteers with a range of backgrounds to conduct some client interviews. This prompted our team to figure out how to build interviewing capacity. We came up with Tip Sheets for Interviewing, then created and recorded some mock interviews for training purposes. Because we wouldn't be there to run the training, we wanted to make sure the volunteers still had some direction, so we included a worksheet for trainees to reflect on the recorded interviews: Why was the interviewer asking that? Why was that wording used? What did the interviewer do when the interviewee said this…? We provided materials clarifying the interviewer's role (not a therapist, but an empathetic listener) and made sure interviewers would have access to a list of community resources if needed. We also raised awareness about vulnerable populations and offered some preparation for scenarios that might arise with individuals who are feeling distress.

Analysis. Data collection is only one part of an evaluation. We knew this organization didn't have much capacity or expertise for diving into Excel spreadsheets, so we built them a dashboard. They could gather survey data in Excel and, at any time, auto-populate a dashboard that visualized key findings for them. We included a step-by-step instruction guide to help them out. We also wanted to make sure the organization understood what it means to be the keeper of data, so we shared our Eval Academy data stewardship infographic.

Reporting and reflection. The dashboard was a good start, but we really wanted to support this organization to use the information they were gathering. Also, some of the data were qualitative and not well represented in the dashboard. We built a report template with headings that signalled where to find information that might answer their key questions. We also built a list of reflective questions to help them think about what their data showed and what actions might follow. You can access it here: Questions to Get You Thinking about Your Data.

This all sounds fairly straightforward, right? We thought about what a team needs to know about evaluation and built them those things. Not so! The entire process was iterative: more of a two-steps-forward-one-step-back kind of journey. With each new idea ("Ah, they need to know about consent"), we'd discover something else to add ("Oh, they also need to know more about confidentiality"). To help with this process, we did a lot of field testing.

We loosely followed a Plan-Do-Study-Act (PDSA) quality improvement format. We'd have a staff member test the process with three to five clients, then we'd huddle to talk about what worked well, what didn't and what unexpected things came up, and then we'd tweak and repeat. Eventually we landed on a process that seemed to work well.

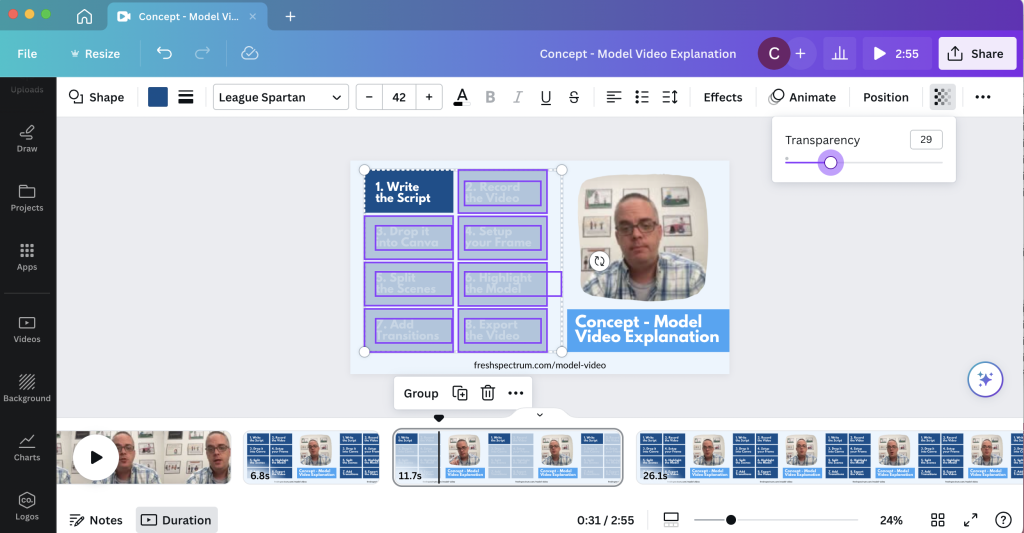

At the end of it all, the Toolkit (now with a capital T!) was pretty large, and we ended up breaking it up into three core sections.

- Describing the process. Who does what and when, what requirements exist for each role, links to the data collection tools, and links to resources. We also included some email invitation templates, scripting for consent, and a tracking log.

- Training. The second section focused on the niche skills that come as second nature to a seasoned evaluator. This is where we included the mock interview recordings, tip sheets, confidentiality and consent primers, guidance on when and whether to disclose information, and how to be a good data steward.

- Reporting. The final section described what to do with the information: the dashboard, the report, the reflective questions and a recommended timeline. We created step-by-step instructions for getting data from an online survey platform into the dashboard, and from the dashboard into the report.

This was a really different experience for me, and I learned a lot about slowing down, explaining process and not making assumptions. It felt strange not to follow up and see how the process was working. We left them with a final recommendation: all evaluation processes should be reviewed regularly, because there is risk in going on auto-pilot. An evaluation process is only worthwhile if it answers key questions and provides actionable insights. I think it showed real insight and good forward planning for this organization to recognize the value of evaluation and to want to learn enough about it to do it on their own.
